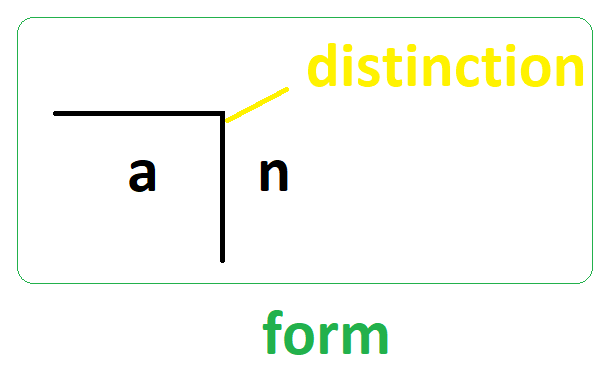

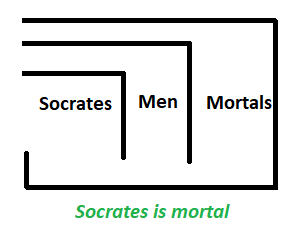

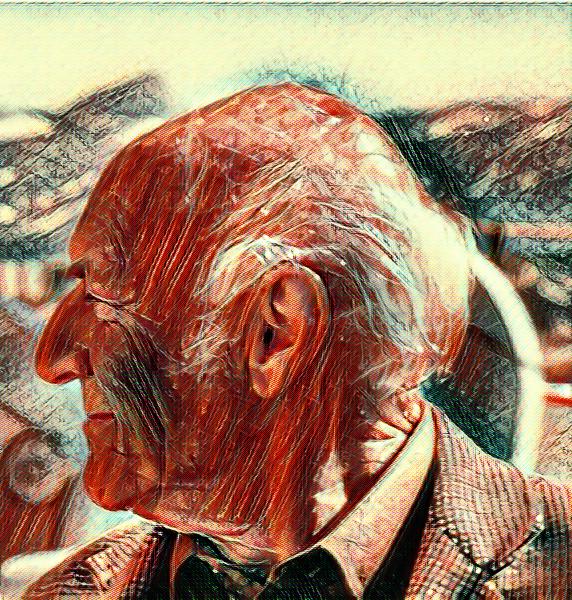

In today’s post, I am looking at the ethical imperative of Heinz von Foerster, the Socrates of Cybernetics:

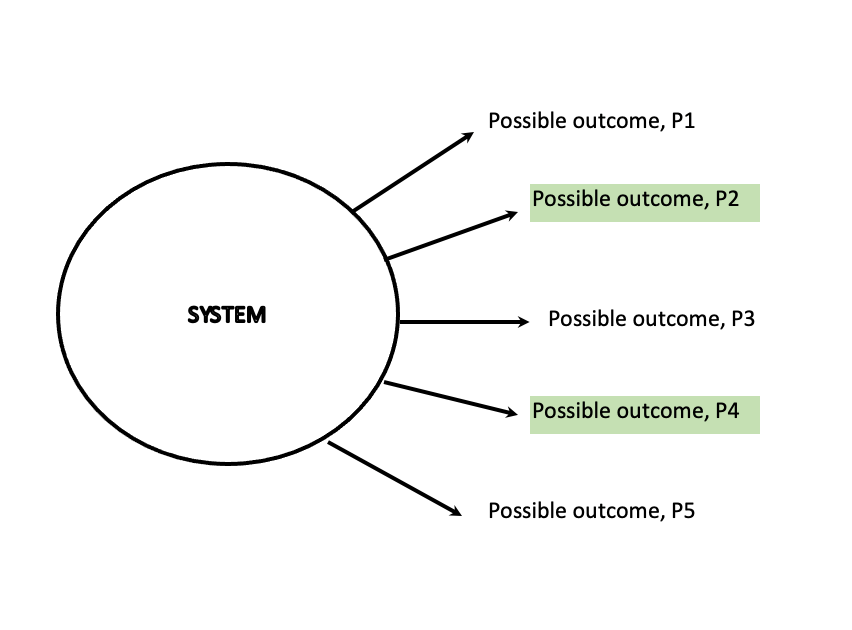

“Always act so as to increase the number of choices.”

I see this as the recursive humanist commandment. It is very much applicable to ethics and to how we should treat each other. Von Foerster said the following about ethics:

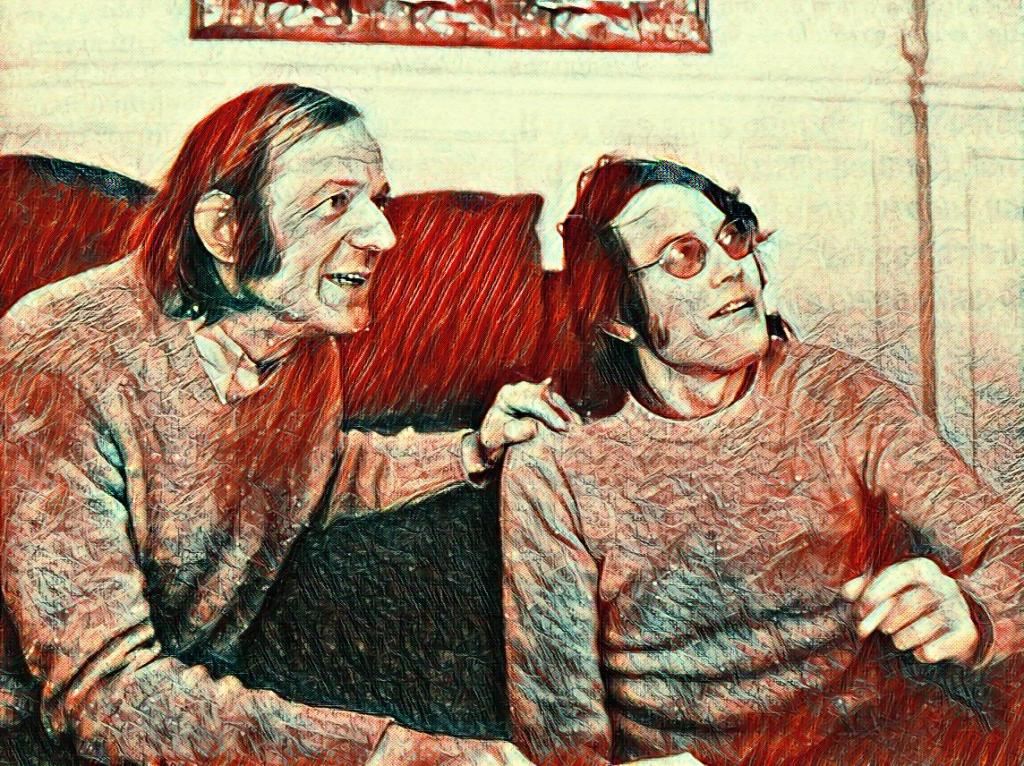

Whenever we speak about something that has to do with ethics, the other is involved. If I live alone in the jungle or in the desert, the problem of ethics does not exist. It only comes to exist through our being together. Only our togetherness, our being together, gives rise to the question, How do I behave toward the other so that we can really always be one?

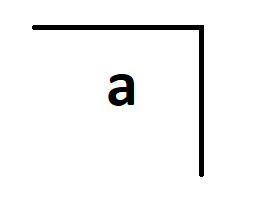

Von Foerster’s views align with constructivism, the idea that we construct our knowledge about our reality. We construct our knowledge to “re-cognize” a reality through the intercorrelation of the activities of the various sense organs. It is through these computed correlations that we recognize a reality. No findings exist independently of observers. Observing systems can only correlate their sense experiences with themselves and each other.

Paul Pangaro reminded me that von Foerster did not mean “options” or “possibilities”. Von Foerster specifically chose the word “choices”. By choices, he meant those selections among options that you might “actually take” depending on who “you are” right now. Here choices narrow down to the few that apply most to what you are now in this moment and in this context, down to a decision that makes you who you are. As von Foerster said, “Don’t make the decision, let the decision make you.” You and the choice you make are indistinguishable.

Since we are the ones doing the construction, we are also ultimately responsible for what we construct. No one should take this away from us. Ernst von Glasersfeld, the father of radical constructivism, explained this well:

The moment you begin to think that you are the author of your knowledge, you have to consider that you are responsible for it. You are responsible for what you are thinking, because it’s you who’s doing the thinking and you are responsible for what you have put together because it’s you who’s putting it all together. It’s a disagreeable idea and it has serious consequences, because it makes you truly responsible for everything you do. You can no longer say “well, that’s how the world is”, or “sono così”; you know, that’s not good enough.

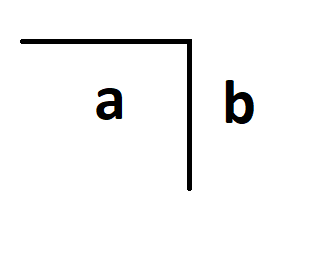

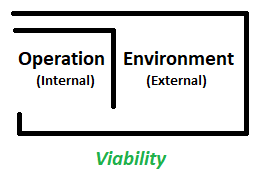

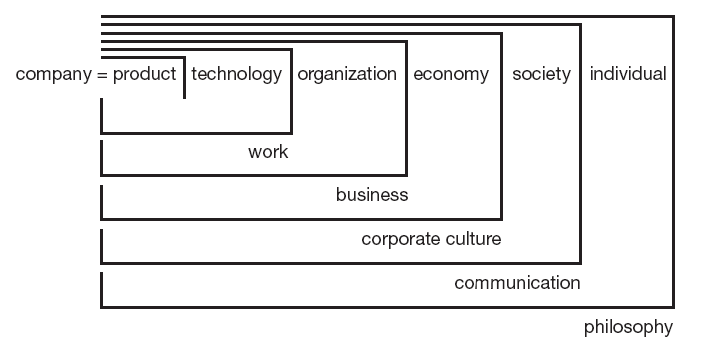

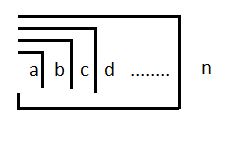

Cybernetics is about control and communication in the animal and the machine, as Norbert Wiener viewed it. When we view control in terms of von Foerster’s ethical imperative, interesting thoughts come about. Control is about reducing the number of choices so that only certain pre-selected activities are available to the one being controlled. For example, a steersman has to control their ship such that it maintains a specific course, and here the ship’s “available options” to move are drastically reduced. When we take this view of control and apply it to human beings, we should do so in light of von Foerster’s ethical imperative.

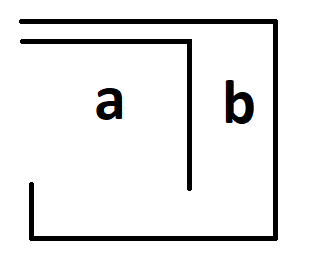

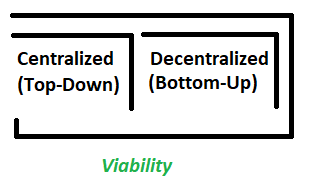

Von Foerster also said – A is better off when B is better off. This provides further clarity on the recursiveness. If I act so as to increase the number of choices for B, then B in turn does the same for me. How I act impacts how others (re)act, which in turn impacts how I act back… on and on. This might remind the reader of the golden rule – Treat others as you would like others to treat you. However, the golden rule misses the point about constructivism and the ongoing interaction that leads to the construction of a social reality. I see this as part of a social contract. As Jean-Jacques Rousseau noted, Man is born free, but everywhere he is in chains. The social contract comes about from the ongoing interactions and the contexts we are in with our fellow human beings as part of being in a society or social groups. This also means that it is dynamic and contingent in nature. What was “good” before may not be “good” today. This requires an ongoing framing and reframing through interactions.

John Boyd, father of the OODA loop, shed more light on this:

Studies of human behavior reveal that the actions we undertake as individuals are closely related to survival, more importantly, survival on our own terms. Naturally, such a notion implies that we should be able to act relatively free or independent of any debilitating external influences — otherwise that very survival might be in jeopardy. In viewing the instinct for survival in this manner we imply that a basic aim or goal, as individuals, is to improve our capacity for independent action. The degree to which we cooperate, or compete, with others is driven by the need to satisfy this basic goal. If we believe that it is not possible to satisfy it alone, without help from others, history shows us that we will agree to constraints upon our independent action — in order to collectively pool skills and talents in the form of nations, corporations, labor unions, mafias, etc — so that obstacles standing in the way of the basic goal can either be removed or overcome. On the other hand, if the group cannot or does not attempt to overcome obstacles deemed important to many (or possibly any) of its individual members, the group must risk losing these alienated members. Under these circumstances, the alienated members may dissolve their relationship and remain independent, form a group of their own, or join another collective body in order to improve their capacity for independent action.

In a similar fashion, Dirk Baecker also noted the following:

Control means to establish causality ensured by communication. Control consists in reducing degrees of freedom in the self-selection of events. This is why the notion of “conditionality” is certainly one of the most important notions in the field of systems theory. Conditionality exists as soon as we introduce a distinction which separates subsets of possibilities and an observer who is forced to choose, yet who can only choose depending on the “product space” he is able to see. If we assume observers on both sides of the control relationship, we end up with subsets of possibilities selecting each other and thereby experiencing, and solving, the problem of “double contingency” so much cherished by sociologists. In other words, communication is needed to entice observers into a self-selection and into the reduction of degrees of freedom that goes with it. This means there must be a certain gain in the reduction of degrees of freedom, which for instance may be a greater certainty in the expectation of specific things happening or not happening.

Ultimately, this is all about what we value for ourselves and for the society we are part of. Our personal freedom makes sense only in light of others’ personal freedoms. That is the context – in relation to another human being, one who may be less fortunate than us. Making the world easier for those less fortunate than us makes the world better for every one of us. I will finish with a great quote from one of my favorite science fiction characters, Doctor Who:

“Human progress isn’t measured by industry. It’s measured by the value you place on a life. An unimportant life. A life without privilege. The boy who died on the river, that boy’s value is your value. That’s what defines an age. That’s what defines a species.”

Please maintain social distance, wear masks and get vaccinated, if able. Stay safe and always keep on learning…

In case you missed it, my last post was The Constraint of Custom: