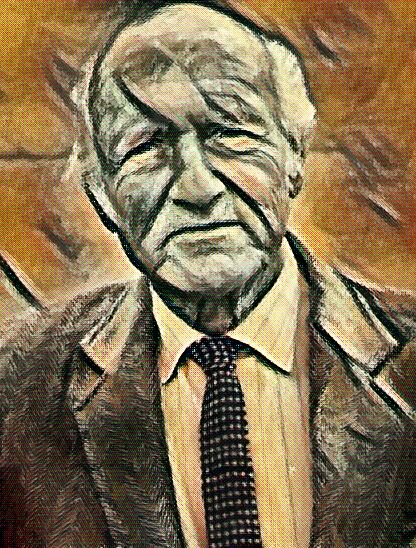

Today’s post is a follow-up of sorts to my earlier post – The Truth about True Models. In that post, I talked about Dr. Donald Hoffman’s Fitness-Beats-Truth (FBT) Theorem. Loosely put, the idea behind the FBT Theorem is that we have evolved not to have “true” perceptions of reality. We survived because we had “fitness”-based models rather than “true” models. In today’s post, I continue with this idea using the ideas of Heinz von Foerster, one of my Cybernetics heroes.

Heinz von Foerster came up with “the postulate of epistemic homeostasis”. This postulate states:

The nervous system as a whole is organized in such a way (organizes itself in such a way) that it computes a stable reality.

It is important to note here that we are speaking about computing “a” reality and not “the” reality. Our nervous system is informationally closed (to follow up from the previous post). This means that we do not have direct access to the reality outside. All we have is what we can perceive through our perception framework. The famous philosopher Immanuel Kant distinguished between the noumena (the reality that we don’t have direct access to) and the phenomena (the perceived representation of the external reality). All we can do is compute a reality based on our interpretive framework. This is just one version of reality, and the version each of us computes is unique to us.

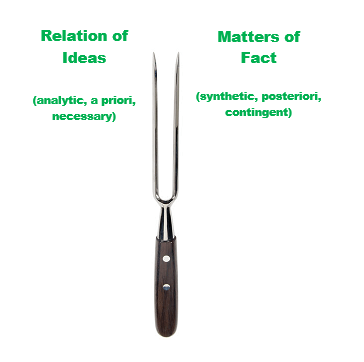

The other concept to note is the “stable” part of the stable reality. In Gödelian* speak, our nervous system cares more about consistency than completeness. When we encounter a phenomenon, our nervous system looks at stable correlations from the past and present, and computes a sensation that confirms the perceived representation of the phenomenon. Von Foerster gives the example of a table. We can see the table, and we can touch it, and maybe bang on it. With each of these confirmations and correlations between the different sensory inputs, the table becomes more and more a “table” to us.

*Kurt Gödel, one of the most famous logicians of the last century, showed that any formal system able to do elementary arithmetic cannot be both complete and consistent; it is either incomplete or inconsistent.

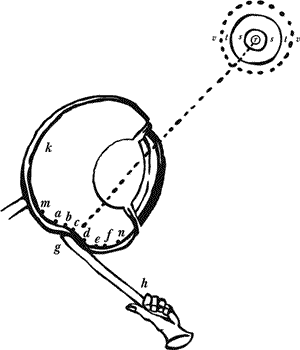

From the cybernetics standpoint, we are talking about an observer and the observed. The interaction between the observer and the observed is an act of computing a reality. The first step in computing a reality is making distinctions. If there are no distinctions, everything about the observed will be uniform, and no information can be processed by the observer. The distinctions refer to the variety of the observed. The more distinctions there are, the more variety the observed has. From a second order cybernetics standpoint, the variety of the observed depends upon the variety of the observer. This goes back to the unique stable reality computation point from earlier. Each one of us is unique in how we perceive things. This is our variety as the observer. The observed, that which is external to us, always has more potential variety than we do. We cut down or attenuate this high variety by choosing certain attributes that interest us. Once the distinctions are made, we find relations between these distinctions to make sense of it all. This corresponds to the confirmations and correlations that we noted above in the example of a table.
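The idea of attenuating variety can be made a little more concrete with a toy sketch. Everything in it is an assumption made for illustration: the attribute names, their values, and the choice to count variety as the number of distinguishable combinations are mine, not von Foerster’s. It only shows how two observers who attend to different attributes end up with different, and much smaller, varieties than the observed potentially has.

```python
from itertools import product

# Hypothetical attributes of an observed table (illustrative values only).
observed_attributes = {
    "color": ["brown", "black", "white"],
    "material": ["wood", "metal"],
    "height": ["low", "high"],
    "surface": ["smooth", "rough"],
}


def variety(attributes):
    """Count distinguishable states: one per combination of attribute values."""
    return len(list(product(*attributes.values())))


def attenuate(attributes, attended):
    """Keep only the attributes this observer makes distinctions about."""
    return {name: values for name, values in attributes.items() if name in attended}


potential_variety = variety(observed_attributes)
observer_a_variety = variety(attenuate(observed_attributes, {"color", "height"}))
observer_b_variety = variety(attenuate(observed_attributes, {"material"}))
no_distinctions = variety(attenuate(observed_attributes, set()))

print(potential_variety, observer_a_variety, observer_b_variety, no_distinctions)
```

With no distinctions at all, the count collapses to a single state, which is the “everything is uniform” case: there is nothing left to tell apart.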

We are able to survive in our environment because we are able to continuously compute a stable reality. The stability comes from the recursive computations of what is being observed. For example, let’s go back to the table. Our eyes receive the sensory input of the image of the table. This is the first set of computations. This sensory image then goes up the “neurochain”, where it is computed again. This happens again and again as the input gets “decoded” at each level, until it gets satisfactorily decoded by our nervous system. The final result is a computation of a computation of a computation of a computation and so on. The stability is achieved from this recursion.
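A small numerical sketch shows how recursion alone can produce stability. This is only an analogy under loud assumptions: the cosine function stands in for “a computation”, and the helper name `compute_stable_value` is made up for this post. It says nothing about what the nervous system actually computes. The point is simply that applying the same operation to its own output, over and over, can settle on a value that no longer changes, regardless of where it started.

```python
import math


def compute_stable_value(operator, start, tolerance=1e-12, max_steps=10_000):
    """Feed the operator's output back in as its input until it stops changing."""
    value = start
    for step in range(1, max_steps + 1):
        next_value = operator(value)
        if abs(next_value - value) < tolerance:
            return next_value, step
        value = next_value
    raise RuntimeError("no stable value reached within max_steps")


# Two very different starting "inputs" (arbitrary illustrative numbers).
stable_from_small, steps_small = compute_stable_value(math.cos, 0.1)
stable_from_large, steps_large = compute_stable_value(math.cos, 25.0)

print(stable_from_small, steps_small)
print(stable_from_large, steps_large)
```

Both runs end at the same value, one that the operation maps back onto itself. The stable value belongs to the recursion, not to the starting input.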

The idea of consistency over completeness is quite fascinating. Our nervous system favors consistency mainly because it is limited in its ability to have a true representation of reality. There is a common belief that we live with uncertainty, but our nervous system strives to provide us a stable version of reality, one that is devoid of uncertainties. We are able to think about this only from a second order standpoint. We are able to ponder our cognitive blind spots because we are able to do second order cybernetics. We are able to think about thinking. We are able to put ourselves into the observed. Second order cybernetics is the study of observing systems, where the observers themselves are part of the observed system.

I will leave the reader with a final thought – the act of observing oneself is also a computation of “a” stable reality.

Please maintain social distance and wear masks. Stay safe and Always keep on learning…

In case you missed it, my last post was Wittgenstein and Autopoiesis: