Part 2: Boundary Critique and Condition Creation

Refer to my previous post here.

In today’s post, I am following up on what leadership means once we recognize that organizations do not have purposes, but people do. If we cannot simply align everyone to an organizational purpose, what does it mean to lead? How do we create conditions where diverse human purposes can interact productively?

I am drawing on insights from Critical Systems Heuristics, second-order cybernetics, and systems thinking. The ideas here continue from my previous post on organizational purpose.

Leaders as Condition Creators Within the System:

If organizations do not have purposes, what does leadership mean? I believe leaders are people who take up the responsibility to create conditions so that desired patterns of behavior and interaction emerge.

But here is the crucial point from second-order cybernetics that I find fascinating. Leaders are not neutral architects standing outside the system. They are participants whose own purposes drive their condition-creating. When a leader decides what outcomes are desired, they make that determination based on their own purposefulness, their own constructed sense of what matters.

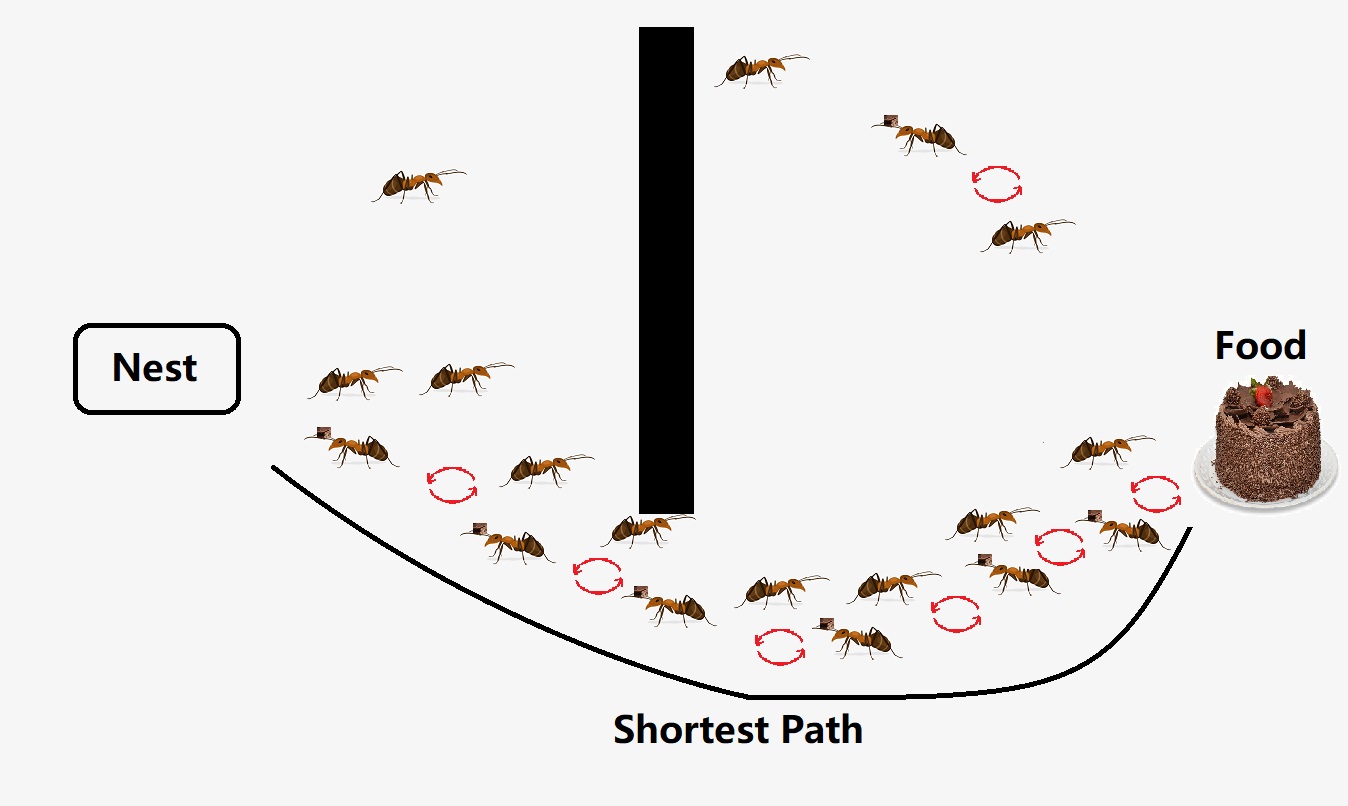

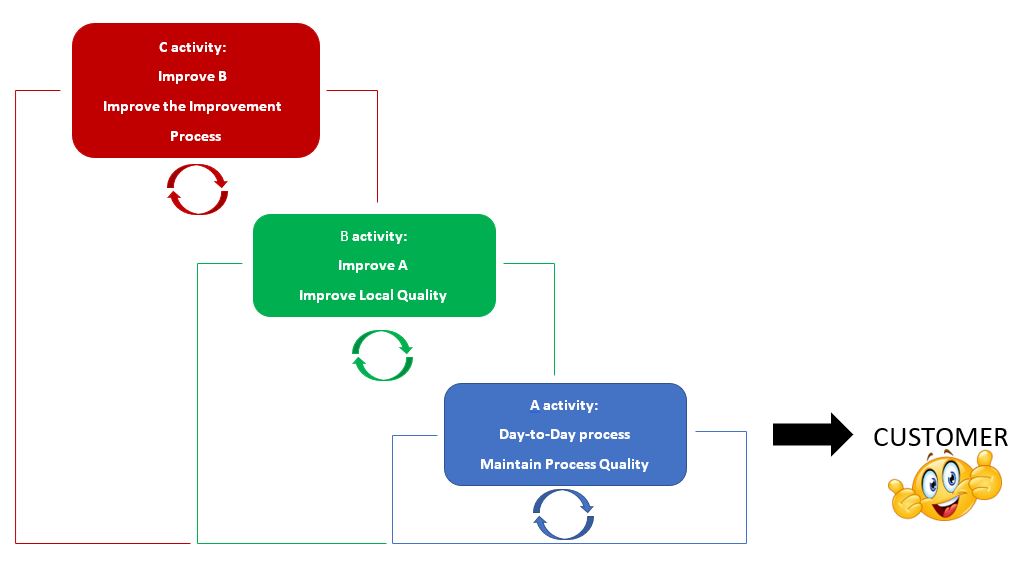

This creates recursive loops that traditional leadership thinking ignores. I picture this as a spiral of mutual influence. Leaders create conditions based on their purposes. These conditions interact with others’ purposes. The resulting patterns influence what the leader observes as working or failing. This changes the leader’s purposes and their condition-creation. The cycle continues.

I should note that this recursive leadership operates at multiple time scales. Leaders need to maintain day-to-day viability by preserving conditions that allow current purpose interactions to function. This is the frequent work of maintaining operational stability. But they must also monitor whether environmental changes threaten the essential variables that enable people to maintain their purposefulness and adaptive capacity.

When environmental shifts make current conditions unsustainable, leaders need to engage in what Ashby called ultrastability. For instance, when sudden regulatory changes undermine existing processes, stability requires maintaining day-to-day viability, but adaptation might mean restructuring the whole feedback system. The challenge for leaders is knowing when to maintain stability and when adaptation requires breaking and rebuilding the very conditions they have been protecting.
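To make the two loops a little more concrete, here is a minimal Python sketch of an ultrastable arrangement. It is only my own toy illustration, not Ashby's model and not a model of any real organization. The names (`simulate`, `settings`, `effect`, `pressure`) and all the numbers are assumptions chosen so that the behavior is easy to follow. The first loop is routine feedback under the current setting. The second loop steps to the next setting only when the essential variable leaves its limits, loosely in the spirit of the stepping switch in Ashby's homeostat.

```python
def simulate(steps=40, shock_at=5):
    """Toy two-loop (ultrastable) system. All values are illustrative assumptions."""
    limit = 1.0                        # essential variable must stay within [-limit, limit]
    settings = [0.5, 0.8, -0.8, -0.5]  # hypothetical "conditions" that can be switched between
    current = 0                        # index of the setting currently in use
    effect = 1.0                       # how the environment turns action into outcome
    pressure = 0.1                     # constant disturbance acting on the system
    x = 0.2                            # essential variable (starts at its resting value)
    redesigns = 0
    trace = []

    for t in range(steps):
        if t == shock_at:
            # Environmental shift: the same action now has the opposite effect.
            effect = -1.0

        # First loop: day-to-day regulation under the current setting.
        gain = settings[current]
        x = (1 - effect * gain) * x + pressure

        # Second loop: if the essential variable is out of bounds,
        # abandon the current setting and step to the next one.
        if abs(x) > limit:
            current = (current + 1) % len(settings)
            redesigns += 1

        trace.append((t, round(x, 3), settings[current]))

    return x, settings[current], redesigns, trace


if __name__ == "__main__":
    final_x, final_setting, redesigns, trace = simulate()
    for row in trace[:12]:
        print(row)
    print("redesigns:", redesigns, "final setting:", final_setting)
```

In this sketch the setting that kept things stable before the shift is exactly the one that destabilizes things afterwards. The system does not know in advance which setting will work. It only knows that the essential variable has left its limits, and it keeps changing its own conditions until viability returns.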

The leader is simultaneously observer and observed, designer and designed. Their responsibility does not come from some organizational mandate. It emerges from their own purposefulness and their relationships with other purposeful people in the system.

This raises critical ethical questions that I find compelling. Given that leaders’ individual purposes inevitably shape condition-creation, how do they prevent their strong personal purposes from overshadowing the genuine emergence of diverse patterns?

From the standpoint of cybernetic constructivism, I believe the answer lies in the recursive nature of their role. As they create conditions for others to observe and influence the system, they must also create conditions for others to observe and influence their own condition-creating behavior. Leaders should engage in systematic practices of self-critique. They also need regular feedback loops that show how their condition-creating affects others’ viability, and structured processes through which others can question their boundary-drawing decisions.

Aiming for Betterment Through Boundary Critique:

Rather than imposing abstract organizational goals, I see leadership as creating conditions to maximize the viability and flourishing of as many participants as possible. This includes ensuring transparent and just processes for navigating inevitable trade-offs.

I acknowledge the reality that in complex systems with genuinely conflicting purposes, achieving betterment for absolutely everyone may be impossible. Some purposes can prove incompatible. Some trade-offs can disadvantage certain participants. Some conflicts may require difficult choices about whose viability takes priority in specific contexts.

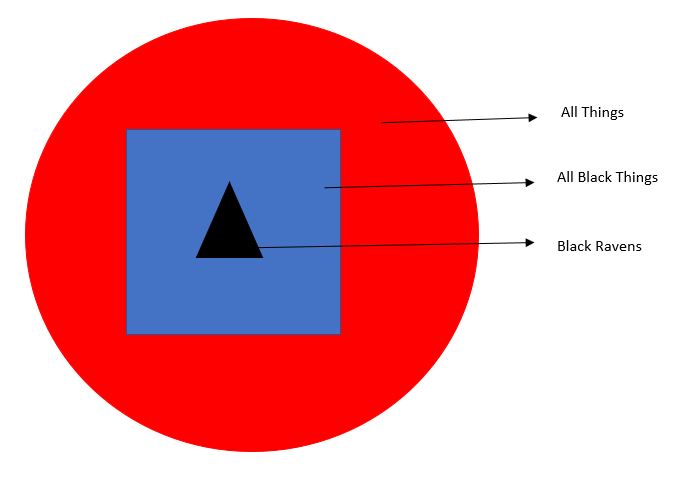

This is where Critical Systems Heuristics becomes essential. I believe the leader’s purpose becomes systematically questioning boundaries and stakeholder perspectives to prevent falling into benevolent paternalism. The focus turns to identifying who is not being served by current arrangements. Whose voices are not being heard? Who are the “losers in the game”?

Instead of “I will identify the losers and make their lives better”, the approach becomes “I will create conditions where people can identify when they are losing and have agency to change that”. This requires ongoing boundary critique. This might involve facilitated reflection sessions where excluded stakeholders name their concerns, or governance mechanisms where power asymmetries are explicitly surfaced.

Questions such as these become essential. Who ought to belong to the system of stakeholders? What ought to be the purpose of the system? Who is not being served by this system? Whose voices are not being heard? But these questions require systematic, repeated processes to prevent them from becoming empty rituals.

When purposes prove genuinely incompatible, I believe the leader’s role is not to force resolution but to create transparent processes for making trade-offs and supporting those whose purposes cannot be accommodated within the current system. This might involve restructuring teams. It might mean creating parallel tracks for different approaches. It could include helping people find more compatible contexts for their purposes, or providing transition support for those who need to leave.

Through this process, what we observe through POSIWID analysis (the purpose of a system is what it does) becomes more aligned with supporting individual viability and collective flourishing. This is not because “the system” changes its behavior, but because the patterns of human interaction shift.

Purpose and Profit as Emergent Outcomes:

When conditions support individual recursive viability through ongoing boundary critique, when people can maintain their own purposefulness while engaging productively with others, the patterns of behavior often transcend simple profit maximization. Innovation, resilience, creativity, sustainability, and quality of life all emerge as natural expressions of viable recursive interactions. These become part of what we can observe through POSIWID analysis.

The profit motive does not disappear. It becomes one element in the larger emerging patterns of collective viability that arise from supporting individual viability. Profit becomes a signal that people are creating value that others are willing to exchange something for. But through our refined POSIWID approach, we can see that it is a lagging indicator of the health of human interactions rather than the primary driver of behavioral patterns.

When we apply POSIWID to this approach, we can observe whether the conditions actually support individual viability and produce emergent collective benefit. Or do they just generate new forms of rhetoric while the same problematic patterns of interaction continue?

The question is not whether to choose profit or purpose. This is a false dichotomy. The question is this: how do we create conditions where human flourishing and value creation emerge together? How do we support people pursuing what matters to them in relationship with others, while systematically questioning who gets to define what flourishing and value mean?

Final Thoughts:

Leadership in this light requires epistemic humility and acceptance of pluralism. This approach exposes the myth of the benevolent paternalistic leader. The leader cannot be all-knowing and all-powerful. No single person can understand all the purposes at play or predict how they will interact under different conditions.

Epistemic humility means acknowledging the limits of what any observer can know. When we recognize that our observations are shaped by our own purposes and position, we become more cautious about imposing our view of what is best for others. We focus instead on creating conditions where people can pursue their own definitions of flourishing while engaging constructively with others who have different purposes.

Acceptance of pluralism means recognizing that people legitimately hold different purposes and values. These differences are not problems to be solved but realities to be worked with. The art lies in creating conditions where diverse purposes can interact without requiring false unity or artificial harmony.

I find it meaningful that humans evolved as a species to rely on each other. As Heinz von Foerster observed, “A is better off when B is better off”. This insight from second-order cybernetics points toward creating conditions where mutual viability becomes possible. We should focus on building conditions where we can rely on each other rather than trying to control each other.

A wise leader focuses on minimizing harm first before maximizing benefits. In complex systems with genuinely conflicting purposes, I believe the first priority is ensuring that our condition-creating does not undermine the viability of those we claim to serve. Only then can we work toward enhancing collective capability.

When we work with the actual agency of actual people, guided by epistemic humility and acceptance of pluralism, we discover possibilities for organizing that honor both individual viability and collective capability.

Stay Curious, and Always Keep on Learning…