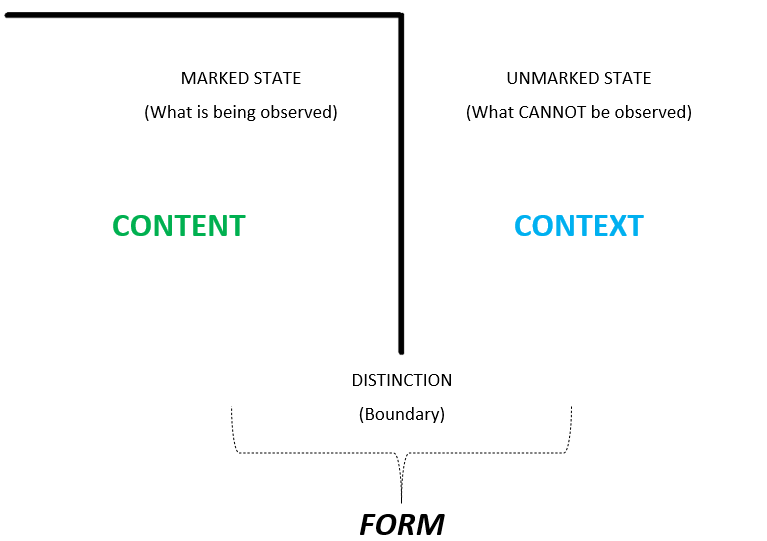

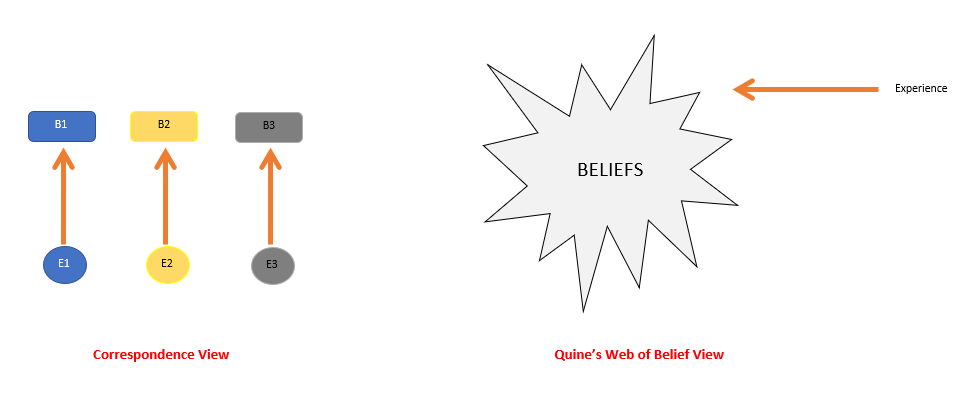

What can be studied is always a relationship or an infinite regress of relationships. Never a “thing.” – Gregory Bateson

In today’s post, I am continuing to look at our infatuation with blindly pursuing efficiency, drawing on the work of the American sociologist Susan Leigh Star. Star’s concept of invisible infrastructure focuses on the human and social dimensions of ‘systems’ that are often overlooked or undervalued when their efficiency is evaluated. Her notion of “infrastructure” extends far beyond physical systems like roads or servers. In her framework, infrastructure is a sociotechnical web of material and human elements, all deeply embedded in organizational and social practices. Her key insight was that when things work, what makes them work remains invisible to us.

She wrote [1]:

People commonly envision infrastructure as a system of substrates—railroad lines, pipes and plumbing, electrical power plants, and wires. It is by definition invisible, part of the background for other kinds of work. It is ready-to-hand. This image holds up well enough for many purposes—turn on the faucet for a drink of water and you use a vast infrastructure of plumbing and water regulation without usually thinking much about it.

For Star, infrastructure is a complex domain with several underlying attributes. She and her team identified the following [1]:

Embeddedness: Infrastructure is fundamentally integrated within other structures, social arrangements, and technologies. These elements are so interconnected that it becomes difficult to separate the infrastructure from the social and organizational systems it supports.

Transparency: Infrastructure operates invisibly to support tasks without requiring rebuilding or reconfiguration. Expert users understand exactly what needs to be done, making the infrastructure transparent to them. For novices, however, the same infrastructure may appear opaque and challenging to navigate.

Reach and Scope: Infrastructure’s influence extends beyond specific tasks or locations, creating patterns that affect both spatial and temporal aspects of work. These broader impacts often remain subtle until explicitly examined.

Learned as Part of Membership: Users develop familiarity with infrastructure through ongoing participation in their communities. While newcomers might struggle initially, regular users develop an implicit understanding that allows them to work with the infrastructure naturally.

Links with Conventions of Practice: Infrastructure shapes community practices while simultaneously being shaped by them. The QWERTY keyboard exemplifies this relationship: its layout has carried over into modern computing interfaces even though the mechanical limitations it was designed around no longer apply.

Embodiment of Standards: While infrastructure incorporates standardized practices, these standards vary across different communities and contexts. This variation reflects local adaptations and specific needs of different groups.

Built on an Installed Base: Infrastructure develops from existing systems, inheriting both their capabilities and their constraints. This inheritance affects how new capabilities can be implemented and integrated.

Becomes Visible Upon Breakdown: Infrastructure remains invisible during normal operation, becoming apparent only when it fails. This invisibility masks the complexity of interactions and dependencies until disruption occurs.

Fixed in Modular Increments: Infrastructure is changed piece by piece, not all at once or globally. Because it is large, layered, and means different things in different local settings, modifications must be negotiated and made while existing operations continue.

Star highlighted that much of the labor that sustains infrastructure is hidden from view. This includes everyday tasks like troubleshooting, mentoring, and resolving problems that aren’t captured in traditional efficiency metrics. This “invisible labor” is essential for keeping systems running smoothly but often goes unacknowledged until something breaks down. Because infrastructure is invisible when it works well, people do not usually notice the networks or the labor involved unless something goes wrong. For example, employees who manage crises or adapt systems to unexpected challenges often go unnoticed, but when they are gone, the gaps they filled become painfully obvious.

Star illustrates this further through the example of nursing work in hospitals. When such work remains implicit, it becomes invisible – as one respondent noted, it is simply “thrown in with the price of the room.” However, once this work is made explicit and measurable, it becomes vulnerable to cost-cutting measures and efficiency metrics. This example demonstrates how the very act of making invisible work visible can threaten its existence, despite its crucial role in maintaining the infrastructure.

Human participants are what connect the elements of a network, and the network’s values and purposes come from them. Star noted that infrastructure is embedded in social practices and human relationships. This means that the work of employees (how they interact with each other, share knowledge, or resolve conflicts) becomes part of the infrastructure itself. When organizations remove people to streamline operations, they erase these informal networks, which can undermine the functioning of the ‘system’.

Another key insight from Star was that the mindless pursuit of efficiency can propagate deep social inequalities. Star emphasized that infrastructure is not neutral; it reflects power dynamics, values, and social structures. When efficiencies are pursued without recognizing the human labor behind them, they can perpetuate inequities and make the infrastructure less adaptable and more vulnerable to failure. Layoffs carried out in the name of efficiency often fall hardest on marginalized workers, the very people whose labor keeps the infrastructure invisible.

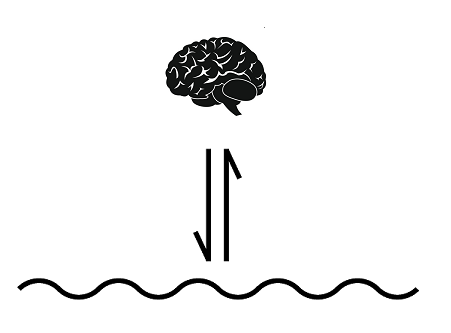

From a cybernetic perspective, maintaining viability requires some redundancy or spare capacity in order to achieve requisite variety. External variety is always orders of magnitude higher than internal variety. Most of the time, the policies and procedures set by those higher up are rigid and unable to match this external variety. Instead, variety is managed by the employees at the levels where the external world throws that variety at the organization. What they do is not documented in any policy or procedure, and the formal view ignores how real-world messiness is tackled by employees every day. Cutting staff removes tacit knowledge and informal networks that are critical to keeping systems running, even if management does not formally acknowledge them.

Efficiency assumes predictability, a luxury that organizations do not have. Efficiency is usually tracked through quantifiable metrics such as productivity quotas, and these metrics tend to obscure the complexity of the networks.

Final Words:

The tendency to view infrastructure as merely technical ‘systems’ that can be optimized through efficiency metrics fundamentally misunderstands how complex ‘systems’ actually work. The invisible elements – human relationships, tacit knowledge, informal networks, and social practices – are not inefficiencies to be eliminated but rather critical components that enable ‘systems’ to adapt and survive in an unpredictable world.

When organizations pursue efficiency without recognizing these invisible dimensions, they risk damaging the very mechanisms that make their systems resilient. The human capacity to adapt, solve problems, and maintain relationships forms an essential infrastructure layer that formal processes and metrics cannot capture. This “invisible infrastructure” provides the flexibility and intelligence needed to handle real-world complexity. Removing it weakens the infrastructure’s ability to self-regulate. A network’s error correction of error correction lies within its tacit and social dimensions, and this is key to keeping networks viable in a sea of complexity. We need to start framing resilience and redundancy as infrastructure investments, not inefficiencies. We need to start valuing the invisible.

I will finish with a thought-provoking quote from Star and Bowker:

But what are these categories? Who makes them, and who may change them? When and why do they become visible? How do they spread?…Remarkably for such a central part of our lives, we stand for the most part in formal ignorance of the social and moral order created by these invisible, potent entities.

References:

[1] The Ethnography of Infrastructure, Susan Leigh Star