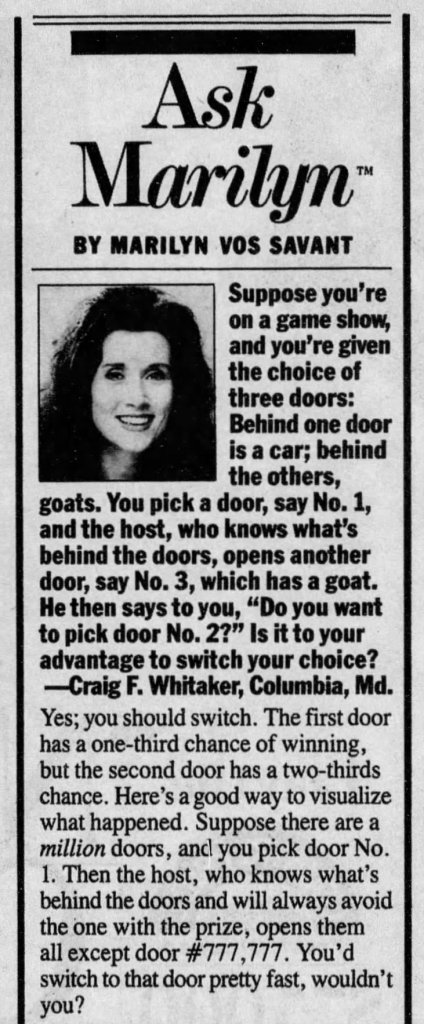

The Monty Hall problem has to be one of the most fascinating probability problems. It became widely known when a reader posed it to Marilyn vos Savant in her column, “Ask Marilyn,” in Parade magazine:

Suppose you’re on a game show, and you’re given the choice of three doors: Behind one door is a car; behind the others, goats. You pick a door, say No. 1, and the host, who knows what’s behind the doors, opens another door, say No. 3, which has a goat. He then says to you, “Do you want to pick door No. 2?” Is it to your advantage to switch your choice?

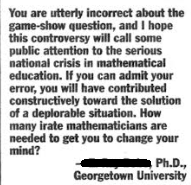

Her response was that the player should switch. This caused an uproar among her readers. Several readers, including PhDs in Mathematics, wrote back to her saying that she was absolutely wrong. One response read:

“You are utterly incorrect about the game-show question, and I hope this controversy will bring some public attention to the serious national crisis in mathematical education. If you can admit your error, you will have contributed constructively toward the solution of a deplorable situation. How many irate mathematicians are needed to get you to change your mind?”

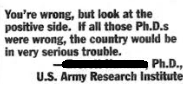

Another response said:

“You’re wrong, but look at the positive side. If all those Ph.D.’s were wrong, the country would be in very serious trouble.”

The common intuition is to look at the two remaining doors, assume that they are equally likely, and conclude that the probability is 50%, or 1/2. However, this is incorrect.

Let’s look at this in another way. If you have three doors (A, B, C), and there is a car behind one of the doors, the probability that you would choose that door at random is 1/3. Let’s say that you chose door A, so p(A) = 1/3.

The probability that the car is behind one of the other two doors is (1/3 + 1/3) = 2/3. This can also be viewed as the car being behind door B or door C.

p(B) + p(C) = 2/3

Now, the host knows which door has the car. Therefore, the host can always open a door without the car. Let’s say he opens door B and shows that it hides a goat. Once door B is shown to hide a goat, p(B) = 0. Because the host was always going to reveal a goat behind one of the two doors you did not pick, his action tells you nothing new about door A, so p(A) stays at 1/3 and the entire 2/3 now rests on door C. Thus, it is logical for you to switch, which increases your probability of winning from 1/3 to 2/3.
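The argument above can be checked empirically. The following is a minimal Python sketch, not the author’s simulator; the function names `play_round` and `win_rate`, the seed, and the default trial count are illustrative assumptions. It plays the classic game many times with a host who always opens a goat door, and compares staying with switching.

```python
import random


def play_round(switch, rng):
    """Play one classic round; return True if the player wins the car."""
    doors = [0, 1, 2]
    car = rng.choice(doors)
    pick = rng.choice(doors)
    # The host knows where the car is and opens a goat door
    # that is not the player's pick.
    opened = rng.choice([d for d in doors if d != pick and d != car])
    if switch:
        # Exactly one door is neither the original pick nor the opened one.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == car


def win_rate(switch, trials=100_000, seed=0):
    """Estimate the win rate for a fixed strategy (always switch or always stay)."""
    rng = random.Random(seed)
    wins = sum(play_round(switch, rng) for _ in range(trials))
    return wins / trials


if __name__ == "__main__":
    print("stay:  ", win_rate(switch=False))
    print("switch:", win_rate(switch=True))
```

With enough trials, the switching win rate should settle near 2/3 and the staying win rate near 1/3.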

Here is another example to explain this. Let’s say that a “bad” magician shuffles a deck of playing cards, spreads the cards out face down, and asks you to pick the Ace of Spades. You choose one card at random and place it in your pocket without looking. The probability that you chose the Ace of Spades is 1/52 (assuming there are 52 cards). The probability that the Ace of Spades is in the remainder of the deck is 51/52. The magician is using a marked deck, so he knows each card by looking at its back. He now slowly turns over the remaining cards one at a time, showing that none of them is the Ace of Spades, until a single card remains face down. Should you switch?

Of course, you should. The probability that the remaining card is the Ace of Spades is 51/52. Note that I started this problem by saying it is a “bad” magician. If it is a good magician, you should stick to your original choice.

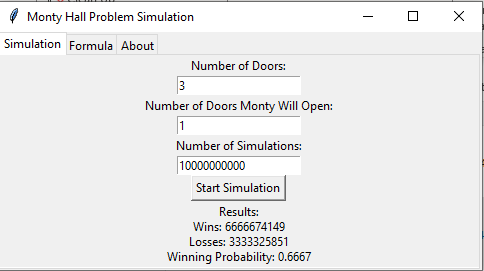

I have created a simulator program that the reader is welcome to play around with. It is an executable file, written in Python; please do scan it for viruses before running it. I ran 10 billion simulations, and the player won about 66.67% of the time (a fraction of 0.6667) when they switched. This agrees with the theoretical probability of 2/3.

The ‘Monty’ in the problem is Monty Hall, the host of the game show Let’s Make a Deal. On the show, he never actually offered players the chance to switch doors; this formulation came from vos Savant’s reader. An earlier, closely related puzzle, the Three Prisoners Problem, was posed by Martin Gardner.

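The Monty Hall problem can be generalized to N doors, where Monty, who knows where the car is, opens M goat doors and the player then switches to one of the remaining closed doors at random. The probability of winning by switching is given by the formula:

p(win by switching) = (N-1)/(N*(N-M-1))

Where:

N = total number of doors

M = number of doors Monty opens (between 0 and N-2)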

In the classic problem, we have 3 doors in total, and Monty opens 1 door.

p(win by switching) = (3-1)/(3*(3-1-1)) = 2/3
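To make the formula concrete, here is a small Python sketch. It assumes, as the formula does, that Monty knowingly opens only goat doors and that the player switches to one of the remaining closed doors chosen at random; the names `p_win_by_switching` and `simulate_switching` are hypothetical. The first function evaluates the formula exactly, and the second estimates the same quantity by simulation.

```python
import random
from fractions import Fraction


def p_win_by_switching(n, m):
    """Exact value of (N-1) / (N * (N-M-1)) as a Fraction."""
    if n < 2 or not 0 <= m <= n - 2:
        raise ValueError("need n >= 2 and 0 <= m <= n - 2")
    return Fraction(n - 1, n * (n - m - 1))


def simulate_switching(n, m, trials=100_000, seed=0):
    """Estimate the switching win rate with n doors and m opened goat doors."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(trials):
        car = rng.randrange(n)
        pick = rng.randrange(n)
        # Doors Monty may open: not the player's pick and not the car.
        goats = [d for d in range(n) if d != pick and d != car]
        opened = set(rng.sample(goats, m))
        # The player switches to a random door that is still closed.
        closed = [d for d in range(n) if d != pick and d not in opened]
        wins += rng.choice(closed) == car
    return wins / trials


if __name__ == "__main__":
    print(p_win_by_switching(3, 1), simulate_switching(3, 1))
    print(p_win_by_switching(10, 3), simulate_switching(10, 3))
```

For the classic game, `p_win_by_switching(3, 1)` gives 2/3. For the card example with 52 cards, of which the magician turns over 50, `p_win_by_switching(52, 50)` gives 51/52.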

All probabilities are conditional probabilities:

Now let’s get back to the classic problem and say that Monty does not know which door has the car. This means that Monty is going to randomly open one of the two doors the player did not pick. Further, let’s say that the door Monty opens happens not to contain the car. In this scenario, should the player switch?

In this scenario, the player gains nothing by switching, since the probability of winning is 1/2 either way. What gives? Let’s look at this further:

- Initial Setup: As in the classic problem, the player has a 1/3 chance of initially picking the car and a 2/3 chance of picking a goat.

- Host’s Random Choice: Unlike the classic problem, the host doesn’t know what’s behind the doors. This is the information that is critical here.

- Possible Outcomes:

    - If the player picked the car (1/3 chance), the host will always reveal a goat.

    - If the player picked a goat (2/3 chance), there’s a 50% chance the host reveals the car (ending the game) and a 50% chance he reveals the other goat.

- Conditional Probability: We’re only considering the scenario where the game continues (i.e., a goat was revealed). This happens in two ways:

    - The player picked the car (1/3 chance) and the host revealed a goat (100% chance given the player’s choice)

    - The player picked a goat (2/3 chance) and the host revealed the other goat (50% chance given the player’s choice)

- Probability Calculation:

    - P(car behind player’s door | goat revealed) = (1/3 × 1) / (1/3 × 1 + 2/3 × 1/2) = (1/3) / (1/3 + 1/3) = 1/2

    - P(car behind other closed door | goat revealed) = (2/3 × 1/2) / (1/3 × 1 + 2/3 × 1/2) = (1/3) / (1/3 + 1/3) = 1/2

The key difference from the classic problem is that the host’s lack of knowledge introduces the possibility of the game ending early (if he reveals the car). This changes the conditional probabilities once we know the game has continued.

In essence, the host’s random choice acts as a filter that equalizes the probabilities. If a goat is revealed, it’s equally likely that it happened because the player initially chose the car or because the player chose a goat and got lucky with the host’s random choice.
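The filtering effect described above can be seen in a short simulation. This is a rough Python sketch under the stated assumption that the host opens one of the two unpicked doors uniformly at random; the function name `simulate_random_host` and its return format are illustrative only. Rounds in which the host reveals the car are discarded, and win rates are computed only over the rounds that continue.

```python
import random


def simulate_random_host(trials=200_000, seed=0):
    """Return (stay_rate, switch_rate, continue_rate) for a host who guesses."""
    rng = random.Random(seed)
    continued = stay_wins = switch_wins = 0
    for _ in range(trials):
        car = rng.randrange(3)
        pick = rng.randrange(3)
        others = [d for d in range(3) if d != pick]
        # The host does not know where the car is and opens a door at random.
        opened = rng.choice(others)
        if opened == car:
            continue  # the car was revealed, so the game ends early
        continued += 1
        remaining = next(d for d in others if d != opened)
        stay_wins += pick == car
        switch_wins += remaining == car
    return stay_wins / continued, switch_wins / continued, continued / trials


if __name__ == "__main__":
    stay, switch, kept = simulate_random_host()
    print("fraction of games that continue:", kept)
    print("stay wins:", stay, "switch wins:", switch)
```

About two thirds of the rounds should survive the filter, and among those, staying and switching should each win roughly half the time.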

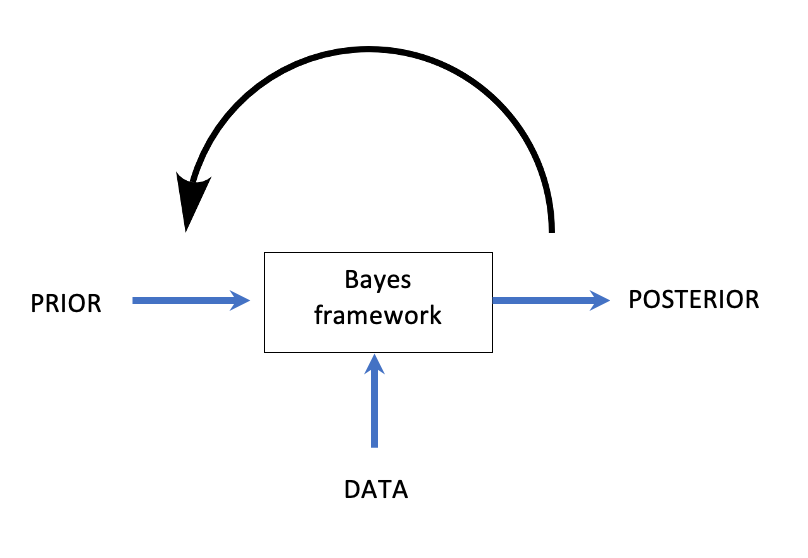

This scenario demonstrates how crucial the host’s knowledge and behavior are to the probabilities in the Monty Hall problem. This leads to the following core ideas of Bayesian statistics:

- All probabilities are conditional probabilities. They always take the form p(an event | the information we have on hand).

- In light of new information, we should update our prior probability.
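Both ideas can be illustrated with a direct application of Bayes’ rule. The sketch below is only an illustration: the helper `bayes_update` is a hypothetical name, the player is assumed to have picked door A, and the observation is “the host opens door B and a goat appears.” It also assumes that a knowing host chooses between two goat doors with equal probability. The prior is the same in both cases; only the likelihoods, which encode the host’s behavior, differ.

```python
from fractions import Fraction


def bayes_update(prior, likelihood):
    """Posterior over hypotheses: prior times likelihood, then normalized."""
    joint = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(joint.values())
    return {h: p / evidence for h, p in joint.items()}


# Prior: the car is equally likely to be behind A, B, or C.
prior = {"A": Fraction(1, 3), "B": Fraction(1, 3), "C": Fraction(1, 3)}

# P(host opens B and shows a goat | car location), player picked A.
# Knowing host: picks B or C at random if the car is behind A (assumption),
# never opens the car door, and must open B if the car is behind C.
knowing_host = {"A": Fraction(1, 2), "B": Fraction(0), "C": Fraction(1)}
# Random host: opens B half the time regardless; a goat shows unless the car is there.
random_host = {"A": Fraction(1, 2), "B": Fraction(0), "C": Fraction(1, 2)}

posterior_knowing = bayes_update(prior, knowing_host)
posterior_random = bayes_update(prior, random_host)

if __name__ == "__main__":
    print("knowing host:", posterior_knowing)
    print("random host: ", posterior_random)
```

With the knowing host, the posterior for door C comes out to 2/3 and door A stays at 1/3; with the random host, doors A and C each end up at 1/2.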

Always keep on learning…