I borrow the term ‘dogma’ from W. V. Quine’s classic essay “Two Dogmas of Empiricism,” where he showed that unquestioned assumptions can quietly shape an entire field. Complexity science, too, rests on its own dogmas that deserve examination.

In today’s post, I want to explore what I see as two fundamental dogmas in how we think about complexity science. These dogmas are deeply embedded in our thinking, and they shape how we create tools, design interventions, and understand organizational life without our realizing it.

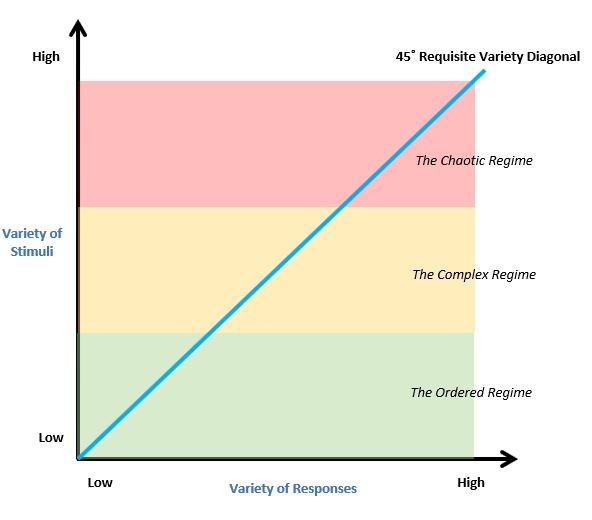

To explain these dogmas, let me use the chart of Ashby Space by Max Boisot and Bill McKelvey. It appears clean, scientific, and objective. The kind of visualization that makes the science feel rigorous and mathematical.

This framework comes from Ross Ashby’s Law of Requisite Variety. It maps organizational viability across different complexity regimes. It seems to offer clear insights. Systems in the ordered regime operate through routine procedures. Those in the complex regime require learning and adaptation. Those in the chaotic regime lose coherence when environmental variety exceeds their response capacity.

The 45° diagonal represents Ashby’s famous law: only variety can absorb variety. Systems above this line face more environmental complexity than they can handle. Systems below it have excess capacity for response. From a conventional perspective, an organization might assess its position by measuring environmental turbulence against internal response capabilities. It might conclude that it needs to increase internal variety to match external complexity.
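To make the conventional reading concrete, here is a minimal sketch of how one might place a system relative to the diagonal. It assumes that variety is simply a count of distinguishable states (Ashby also expressed it as the base-2 logarithm of that count) and that both counts are already given. The function names and the example numbers are hypothetical and chosen only for illustration.

```python
import math


def variety_bits(distinct_states: int) -> float:
    """Variety expressed in bits: log2 of the number of distinguishable states."""
    return math.log2(distinct_states)


def position_relative_to_diagonal(stimuli_states: int, response_states: int) -> str:
    """Compare variety of stimuli (Y-axis) with variety of responses (X-axis)."""
    if stimuli_states > response_states:
        return "above"  # more environmental variety than response variety
    if stimuli_states < response_states:
        return "below"  # excess response capacity
    return "on"  # response variety exactly matches stimulus variety


# Hypothetical numbers: 64 distinguishable stimuli, 8 available responses.
print(position_relative_to_diagonal(64, 8))
print(variety_bits(64), variety_bits(8))
```

Note that the sketch takes both counts as inputs. Where those counts come from is exactly what the rest of this post questions.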

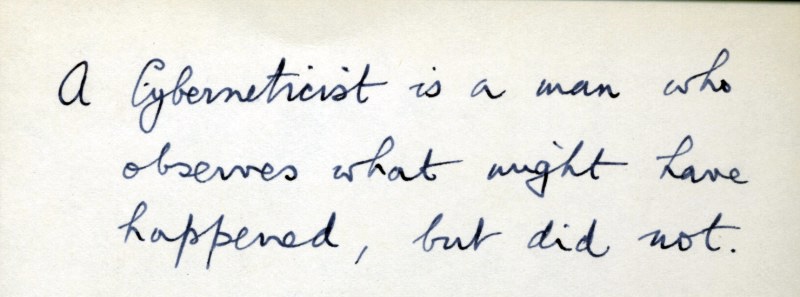

It is worth noting that Ashby himself understood variety as observer-dependent. His cybernetic work emphasized that distinctions are made by observers, not discovered in objective reality. The challenge arises when we operationalize such insights into frameworks and tools. What began as a nuanced understanding of observer-enacted variety becomes translated into seemingly measurable coordinates. This transformation from process to representation exemplifies the dogmas I want to examine.

This transformation reveals two fundamental dogmas that have shaped complexity science.

The First Dogma: Ontological Complexity Realism

The chart treats “variety of stimuli” as if it were an objective quantity that exists independently in the environment. It waits to be measured and plotted on the Y-axis. This reflects what I call ontological complexity realism. This is the belief that complexity is an intrinsic property of systems that exists regardless of who observes them.

Here lies the fundamental problem. Variety does not exist “out there” in any objective sense. What counts as variety depends entirely on the distinctions made by the observer or system. The environment does not contain variety. Variety emerges through the interaction between system and environment, mediated by the system’s capacity for making distinctions.

Let me give you a concrete example from healthcare. Is an emergency room “complex”? For a patient’s family member, the ER appears chaotic and overwhelming. Multiple alarms sound. Staff rush between rooms. Medical terminology flies around that they cannot understand. Life-and-death decisions happen at bewildering speed.

For an experienced ER physician, the same environment reveals familiar patterns. They recognize the rhythm of triage protocols. They understand the meaning behind different alarm sounds. They know the standard procedures that guide most interventions. The complexity is not inherent in the ER itself. It emerges from the coupling between the medical environment and each observer’s capacity for clinical distinction-making.

But this observer-dependence extends equally to the horizontal axis. What counts as “variety of responses” depends entirely on the distinctions the observer can make about available actions. The same ER situation reveals entirely different response repertoires to different observers.

The family member might see only binary options. Panic or wait helplessly. The nurse sees a rich array of possible interventions. The attending physician distinguishes even more nuanced response possibilities. The hospital administrator observes yet another set of responses. None of these response varieties exists independently in the situation. Each emerges from the specific capacity of the observer to make distinctions about what constitutes meaningful action.
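The ER example can be sketched as a toy program. It assumes that each observer can be modeled as a function that sorts events into the categories that observer is able to tell apart. The event names and the three distinction functions are invented for illustration and are not drawn from any clinical source. The same idea applies to either axis of the chart.

```python
from typing import Callable, Iterable

# Hypothetical ER events, invented for illustration only.
EVENTS = [
    "cardiac_monitor_alarm",
    "iv_pump_alarm",
    "ventilator_alarm",
    "overhead_page",
    "triage_call",
]


def family_member(event: str) -> str:
    # Assumed: every event registers as the same undifferentiated noise.
    return "noise"


def nurse(event: str) -> str:
    # Assumed: separates alarms from everything else.
    return "alarm" if event.endswith("_alarm") else "announcement"


def physician(event: str) -> str:
    # Assumed: tells every event apart.
    return event


def enacted_variety(events: Iterable[str], distinguish: Callable[[str], str]) -> int:
    """Count the categories that an observer's distinctions produce."""
    return len({distinguish(e) for e in events})


for observer in (family_member, nurse, physician):
    print(observer.__name__, enacted_variety(EVENTS, observer))
```

The list of events never changes, yet the count does. The number is not a measure of how overwhelming the ER feels to anyone. It only shows that a counting procedure returns nothing until a distinction function is supplied.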

John Dewey understood this when he argued that organism and environment must be understood as parts of a single transaction rather than separate things that interact. Traditional thinking assumes we have an organism “here” and an environment “there.” Then we study how they interact. But Dewey argues this separation is itself an artificial division that obscures the primary reality. The ongoing transaction between organism and environment creates experience itself.

The key insight is that stimulus and response are not external to each other. They are “always inside a coordination and have their significance purely from the part played in maintaining or reconstituting the coordination.” The stimulus is not something that happens to the organism from outside. It is something “to be discovered,” something “to be made out.” It is “the motor response which assists in discovering and constituting the stimulus.”

As Dewey puts it, “The stimulus is that phase of the forming coordination which represents the conditions which have to be met in bringing it to a successful issue. The response is that phase of one and the same forming coordination which gives the key to meeting these conditions.”

This transactional view transforms how we understand knowledge. Instead of a mind representing an external world, we have knowing as a mode of transaction between organism and environment. Knowledge emerges from this transaction rather than copying something pre-existing. This is not purely subjective nor purely objective, but relational.

Applied to complexity science, Dewey’s approach reveals why Ashby Space fails. The chart treats “variety of stimuli” and “variety of responses” as if they were separate, measurable quantities. But these are artificial divisions of the ongoing transaction between system and environment. There is no variety “out there” waiting to be counted. There are no responses “in here” waiting to be catalogued. There is only the ongoing transaction through which system and environment mutually specify each other.

The Second Dogma: Epistemological Representationalism

The chart presents itself as a neutral representation of complexity regimes. This embodies what I call epistemological representationalism. This is the belief that our task is to discover and measure pre-existing complexity through better methods and tools.

This dogma assumes we can create objective maps of complexity that correspond to how the world really is. The clean boundaries between regimes suggest we are mapping objective territory. The precise diagonal line suggests objective measurement. The measurable axes suggest neutral observation rather than conceptual construction.

But the moment you try to actually use this framework, its claims about objectivity break down. Where exactly would you locate a specific organization on these coordinates? How would you measure “variety of stimuli” independently of the system’s own distinction-making processes?

The chart cannot answer these questions because it treats as measurable quantities what are actually dynamic processes of distinction-making. It tries to map what can only be enacted.

Humberto Maturana and Francisco Varela’s work on structural coupling reveals why this approach fails. Living systems do not represent an independent environment. They enact their world through their structure and history of coupling. As Maturana put it, “everything said is said by an observer to an observer.” The boundaries we draw around “systems” and “environments” are distinctions made by observers, not features of an objective world waiting to be mapped.

The Fundamental Contradiction: Mapping the Unmappable

Here lies the deeper issue that cuts to the heart of what we mean by complexity itself. The very notion that complexity can be mapped contradicts the fundamental nature of what it means for something to be complex.

If something is indeed complex, it resists reduction to mappable coordinates. Complexity implies emergence, unpredictability, context-sensitivity, and observer-dependence. These are not accidental features that better measurement tools might eventually overcome. They are defining characteristics of complexity itself.

Yet the frameworks prevalent in complexity science attempt to do precisely what complexity theory tells us should be impossible. They try to reduce emergent, context-dependent, observer-enacted phenomena to static, universal, objective coordinates. This creates a performative contradiction. We use the insights of complexity science to argue that phenomena are emergent and context-dependent. Then we immediately create tools that treat those same phenomena as mappable and context-independent.

The contradiction runs deeper still. If complexity truly emerges from the recursive coupling between observers and their domains of inquiry, then any attempt to create a universal map of complexity must necessarily fail. The observer drawing the map cannot step outside the epistemic coupling that generates the complexity in the first place.

Why These Dogmas Generate Persistent Puzzles

These two dogmas create persistent puzzles that are often ignored. The list below is not meant to be exhaustive.

The Expert-Novice Paradox: Why do experts and novices see different levels of complexity in the same system? If complexity emerges from epistemic coupling, then of course they enact different complexities. They have different capacities for distinction-making.

The Measurement Tool Problem: Why do different measurement tools reveal different complexities? If complexity is relational, then different tools necessarily enact different varieties by making different distinctions possible.

The Scaling Paradox: Why does complexity seem to change when we shift between levels of analysis? Different levels of observation necessarily enact different complexities.

The Intervention Prediction Failure: Why do interventions designed based on complexity mappings so often produce unexpected results? Because any intervention changes the observer-system relationship itself. This makes prediction inherently problematic.

These puzzles persist not because of inadequate methods. They persist because they are generated by the assumptions we bring to complexity science.

Beyond the Dogmas: Epistemic Coupling as Transaction

What if we abandoned these dogmas entirely? Instead of asking “How complex is this system?” we might ask, “How does complexity emerge from the recursive interaction between this knowing system and its environment?”

This shifts focus from measuring pre-existing complexity to understanding epistemic coupling: the dynamic process through which systems and environments mutually specify each other through ongoing interaction. Complexity becomes not a property to be measured but a relationship to be understood.

This framework synthesizes insights from three traditions.

Dewey’s Transaction Theory: Instead of separate entities that interact, we have organism-environment as a unified field. The “stimuli” and “responses” in Ashby Space are abstractions from this ongoing transaction.

Maturana and Varela’s Structural Coupling: Living systems do not represent an environment but enact their world through their structure. The coupling between system and environment is the source of complexity.

Ashby’s Cybernetics: Before the Law of Requisite Variety can even apply, an observer must create variety through distinction-making. The law cannot operate on raw reality. It requires an observer to carve up the world into meaningful categories.

This reinterpretation transforms Ashby’s contribution from a focus on objective regulatory mechanisms to an emphasis on the active and constitutive role of the knowing system in shaping the very “variety” it then seeks to regulate. Rather than discovering pre-existing variety that must be matched, systems participate in enacting the complexity they face through their own distinction-making capacities.

The Chart as Tool, Not Map

This does not mean frameworks like Ashby Space are useless. But we need to understand them differently: not as maps of objective complexity regimes but as tools for thinking about epistemic coupling processes.

Used this way, the framework serves as what Wittgenstein called a ladder. Something we climb up to reach a new perspective, then kick away once we no longer need it. It helps us think more clearly about complexity without pretending to be complexity itself.

Final Words: Complexity as Participation

The chart looked so clean and objective at first. But complexity is messier, more relational, and more participatory than any representation can capture. That is not a limitation to be overcome. It is the very nature of what we are trying to understand.

Understanding complexity as epistemic coupling opens different possibilities. For designing systems that can remain coherent while staying open to surprise. For cultivating capacities for distinction-making that can expand as we encounter new varieties. For taking responsibility for the complexities we participate in creating.

Heinz von Foerster understood this when he formulated his ethical imperative: “Act always so as to increase the number of choices.” If we are responsible for constructing our realities through our distinctions, then we are also responsible for ensuring that others can participate in that construction.

The challenge is not to model the world but to participate in it more wisely. That participation depends fundamentally on understanding that complexity emerges from epistemic coupling, the recursive interaction between knowing systems and their domains of inquiry. This makes us responsible not just for our actions but for the worlds those actions help bring forth.

I will finish with wise words from Quine:

No statement is immune to revision.

Stay curious and Always Keep on Learning…