In today’s post, I want to revisit the notion of information from a cybernetic viewpoint, drawing primarily from Gregory Bateson’s well-known formulation that information is the difference that makes a difference. This definition does not merely redefine information. It quietly displaces where information is assumed to reside and how it is assumed to function. This post is part of a series examining a cybernetic approach to tackling misinformation.

In everyday discourse, information is commonly treated as a thing. We speak of information being transmitted, stored, corrupted, lost, or controlled. This language suggests that information exists independently of those who encounter it, as if it were a commodity that can be packaged and delivered. Cybernetics has long resisted this framing, not by denying the existence of data in the form of signals or messages, but by insisting that information cannot be separated from the consequences it produces within a system.

Bateson’s phrasing forces a pause because it contains two differences, not one. These two differences are often collapsed into a single gesture, which obscures what cybernetics is trying to illuminate. To understand information cybernetically, these differences must be held apart and examined in relation to the observer, the context, and the viability of the system involved.

The first difference concerns distinguishability, or the ability to make distinctions. For a difference to exist as a difference, it must be generated or recognized by an observer. This does not mean that the world lacks structure or regularity. It means that distinctions do not announce themselves independently of the capacities and concerns of the cognitive observer encountering them. An observer must be able to draw a distinction for it to count as a difference at all.

This ability to distinguish is not abstract or universal. It is shaped by history, embodiment, training, and present need. In cybernetic terms, this is a question of variety. An observer with limited internal variety cannot register certain distinctions, regardless of how obvious they may appear to another observer. When something fails to be noticed, the cause is a mismatch between the variety available and the variety required.
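The point about variety can be made concrete with a toy sketch. It is an illustration only, not a formal model: the temperature readings, the two observers, and the function names are all hypothetical. Each observer is reduced to the set of internal states it can enter, and two signals count as distinguishable for that observer only if they land in different states.

```python
from collections import defaultdict

def distinguishable_groups(signals, observe):
    """Group signals by the internal state they produce in an observer.

    Signals that end up in the same group are, for that observer,
    the same signal: no difference has been drawn between them.
    """
    groups = defaultdict(list)
    for signal in signals:
        groups[observe(signal)].append(signal)
    return dict(groups)

# Hypothetical perturbations: six temperature readings.
readings = [12, 18, 21, 24, 30, 37]

def coarse_observer(t):
    # Only two internal states are available (low variety).
    return "cold" if t < 20 else "warm"

def fine_observer(t):
    # Four internal states are available (higher variety).
    if t < 15:
        return "cold"
    if t < 22:
        return "mild"
    if t < 28:
        return "warm"
    return "hot"

coarse = distinguishable_groups(readings, coarse_observer)
fine = distinguishable_groups(readings, fine_observer)

print(len(coarse), coarse)
print(len(fine), fine)
```

For the coarse observer, 21 and 37 are the same event. The readings themselves have not changed. What differs is the number of distinctions the observer is able to draw.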

This immediately situates information within the notion of context. A difference that matters in one situation may be invisible or irrelevant in another. The same signal can be richly informative for one observer and entirely inert for another. From this perspective, the problem of information overload is often misdiagnosed. What overwhelms is not the quantity of differences but the absence of appropriate distinctions and filtering mechanisms within the observer.

The second difference concerns consequence. Not every distinction that can be made will matter. A difference becomes information only when it participates in altering the state, orientation, or activity of the cognizing “system”. This is where the second difference enters: the difference made by the difference.

Cybernetically, this is best understood in terms of viability. A difference matters when it bears upon the conditions under which a cognizing “system” continues to operate. It may support stability, signal threat, invite adaptation, or require reorganization. A distinction that does not affect viability may still be noticed, but it does not rise to the level of information in Bateson’s sense.

In a pragmatic turn, this reframing moves information away from correctness and toward consequence. It is not enough for a distinction to be accurate or well formed. It must matter in practice. Information is therefore tied directly to action potential, even when that action takes the form of restraint, delay, or reconsideration.

Between these two differences sits transduction. Whatever perturbation occurs in the environment does not arrive as meaning. It must be transformed through the structures of the observer. This transformation is neither passive nor optional. It is how a system turns disturbance into significance.

Transduction is deeply contextual and personal, without being arbitrary. It reflects the ways in which a system has learned to respond to its surroundings. Two observers may be perturbed by the same event, yet transduce it differently because their histories, expectations, and responsibilities differ. Meaning is not extracted from the world. It is enacted through ongoing structural coupling.

This is why information cannot be cleanly separated from the observer. What appears as the same input can lead to entirely different informational outcomes. To speak of information without speaking of transduction is to quietly reintroduce representational assumptions that cybernetics sought to set aside.

This leads naturally to the notion of informational closure. As Heinz von Foerster put it, the environment is as it is. It does not contain information waiting to be picked up. It contains events, regularities, and disturbances. Information arises only within operationally closed systems as a result of their internal changes in response to perturbation.

From this viewpoint, information is not transmitted. Signals may pass between systems, but information happens only when a system changes, in response to a perturbation, in a way that matters to it. What is stored is not units of information but traces that may later participate in new acts of distinction. This undermines the idea of information as a substance that can be accumulated or depleted independently of the systems involved.

Human communication introduces an additional layer through language and social coordination. For a difference to make a difference in a social context, participants must be engaged in overlapping language games. Meaning does not reside in words alone but in shared practices, expectations, and forms of life.

Error correction, in this sense, does not occur in the signal but in interaction. A message is understood not because it is decoded correctly, but because the receiver anticipates what is likely to be meant and adjusts that anticipation through feedback. Reading a doctor’s cursive prescription is a familiar example. The pharmacist does not decipher letters in isolation. They draw upon past interactions with the doctor and upon knowledge of medications, dosages, and common medical practice. Understanding emerges from participation, not from transmission.

All of this brings us to a final consideration that is often neglected because it does not present itself as information at all. This is the question of slack. For a difference to make a difference, there must be sufficient room within the system for it to be taken up. This slack can appear in several forms. It may take the form of redundancy, where a distinction is encountered through multiple channels or repetitions. It may appear as amplification, where the manner of presentation gives the difference sufficient weight to register. It may also appear as relaxation time, where the system is afforded the temporal space to digest what has occurred.

Without some degree of slack, even meaningful distinctions fail to become information. When perturbations arrive faster than they can be transduced, the system does not become more informed. It becomes saturated. What follows is not heightened responsiveness but withdrawal. In effect, the system learns that responding no longer contributes to viability.
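A minimal sketch of saturation, under loudly stated assumptions: the observer is modelled as a bounded buffer that can transduce a fixed number of perturbations per tick, and everything beyond the buffer is simply lost. The function name, the parameters, and the numbers are hypothetical and chosen only to show the shape of the argument.

```python
def simulate(arrivals_per_tick, capacity_per_tick, buffer_size, ticks):
    """Toy model of an observer with limited capacity to transduce.

    Each tick, new perturbations arrive. The observer can hold at most
    `buffer_size` of them and can transduce at most `capacity_per_tick`.
    Anything that does not fit in the buffer is dropped unprocessed.
    """
    pending = 0
    transduced = 0
    dropped = 0
    for _ in range(ticks):
        pending += arrivals_per_tick
        if pending > buffer_size:
            # No room left: the excess never registers at all.
            dropped += pending - buffer_size
            pending = buffer_size
        done = min(pending, capacity_per_tick)
        pending -= done
        transduced += done
    return {"transduced": transduced, "dropped": dropped, "pending": pending}

# Same observer, same capacity; only the arrival rate changes.
paced = simulate(arrivals_per_tick=3, capacity_per_tick=3, buffer_size=5, ticks=10)
flooded = simulate(arrivals_per_tick=9, capacity_per_tick=3, buffer_size=5, ticks=10)

print(paced)    # {'transduced': 30, 'dropped': 0, 'pending': 0}
print(flooded)  # {'transduced': 30, 'dropped': 58, 'pending': 2}
```

Tripling the arrivals adds nothing to what is transduced. It only adds to what is dropped. The sketch deliberately leaves out the withdrawal described above, in which the capacity to respond itself shrinks under sustained overload.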

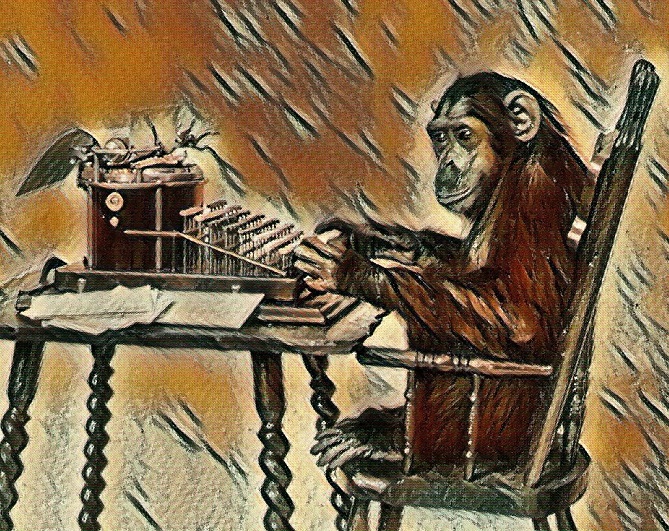

Relaxation time is particularly important in this regard. There was a period when news arrived with built-in pauses. A morning paper or an evening broadcast created a rhythm that allowed distinctions to settle. Between these moments, there was time for discussion, reflection, and forgetting. That rhythm provided slack and may have allowed for a more congenial political climate.

The continuous, twenty-four-hour cycle of today’s media, in which opinion often masquerades as news, has steadily eroded this condition and altered the political landscape in ways that reward polarization and immediacy. Nowadays, perturbations arrive without pause, and the responsibility for digestion has been shifted entirely onto the observer. The result is a familiar paradox. As reports of suffering increase, the capacity to respond meaningfully diminishes. Perturbations may accumulate, but few of them make a difference.

This is often described as complacency or moral failure. From a cybernetic viewpoint, it is more accurately described as a collapse of the conditions under which information can occur. The system is overwhelmed beyond its capacity to transduce, and indifference emerges as a protective response. These are the conditions under which the medium becomes the message.

Final Words:

If information is not a commodity, then neither is attention. Both depend on proportion, timing, and care. Environments that destroy slack while demanding responsiveness do not produce better informed observers. They erode the very capacities required for differences to make a difference.

Seen this way, the preservation of informational conditions is not merely a technical concern. It is an ethical one, bound up with how we design systems, share responsibility, and allow meaning the time and space it requires to emerge.

Stay curious and always keep on learning…

If you liked what you have read, please consider my book “Second Order Cybernetics,” available in hard copy and e-book formats. https://www.cyb3rsyn.com/products/soc-book