In today’s post, I am looking at a question that is rarely asked in management: what if the most responsible course of action is not to maximize benefit, but to minimize harm? In decision theory, this is expressed as the minimax principle. The idea is that one should choose the option whose worst possible outcome is the least bad, that is, minimize the maximum possible loss. In human systems, that worst outcome is best understood as harm to people, relationships, and the invisible infrastructure that sustains collective work.

The language of management is often dominated by the pursuit of gains. Leaders are taught to ask, "What is the best that can happen?" They are told to optimize, to scale, and to seek advantage. The minimax principle turns this question around. It asks instead, "What is the worst that can happen, and how do we prevent it?" Every decision about maximization must be evaluated through the lens of minimizing harm. Harm minimization is not a boundary condition but the primary ethical directive that governs all other management decisions.

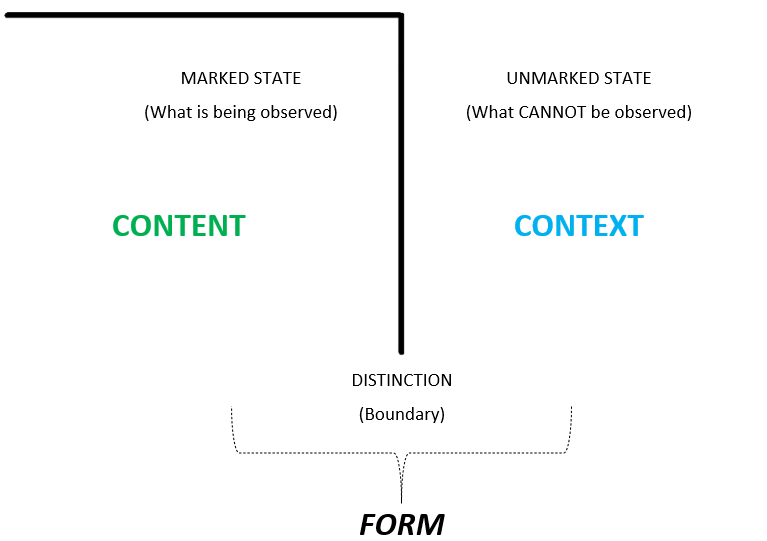

Russell Ackoff once observed that the more efficient you are at doing the wrong thing, the wronger you become. This statement captures the ethical inversion at the heart of many managerial failures. The pursuit of maximum gain often blinds organizations to the quiet forms of loss that accumulate in the background. Human systems depend on tacit networks of trust, communication, and mutual adjustment. When efficiency cuts too deeply, these invisible infrastructures collapse. The system loses its ability to adapt.

To minimize maximum harm is not to resist change. It is not an invitation to stand still. Rather, it is a recognition that progress and ethics operate according to different logics. Progress concerns improvement and expansion. Ethics concerns the protection of dignity, agency, and reversibility. Once we place harm minimization at the center of our decisions, progress becomes sustainable because it no longer depends on exploitation or exclusion.

The primary ethical directive to minimize harm requires a clear operational principle. Heinz von Foerster stated this principle with remarkable clarity: "I shall act always so as to increase the number of choices." This is not a secondary value. It is how harm minimization is operationalized.

Consider what happens when choices are available. When options remain open, people retain the capacity to move in different directions. They can experiment, observe the results, and if those results prove harmful or undesirable, they can try a different direction. This is reversibility. It is not that decisions are undone but that people are not locked into a single path with no way out. Reversibility means the system retains the capacity to self-correct, and this capacity is integral to its viability.

When choices are removed, a different logic takes hold. A decision made under constraint, with no alternatives available, becomes irreversible. The person cannot change course because there is no other path to take. The harm accumulates and cannot be addressed through adaptation or choice. This is an important distinction. To minimize harm is to preserve the optionality that allows people to respond when things go wrong. When you increase the number of choices available to people, you prevent harm from becoming locked in place. You maintain the possibility of recovery. You keep open the horizon of possibilities. The person is not left to say, "I had no choice." That statement expresses the deepest form of harm, the harm from which there is no escape.

This means that every decision about maximization or progress must be evaluated through this lens. Does it increase or decrease the number of choices available to people? Does it preserve reversibility or does it close off futures? Does it prevent irreversible harm or does it create conditions from which recovery is impossible? This is how we operationalize the primary ethical directive in practice.

Werner Ulrich’s Critical Systems Heuristics extends this insight into a framework for reflective practice. Ulrich reminds us that every system boundary includes some and excludes others. Those excluded often bear the consequences of decisions without having had a voice in making them. Ethics therefore requires that we identify who loses in the systems we design, and that we act in ways that allow their participation and emancipation. To preserve choice is to protect those at the margins of decisions. It is to recognize that moral responsibility lies in how boundaries are drawn. When we ask who loses, we are asking a minimax question. We are asking what the worst outcome could be for those at the margins.

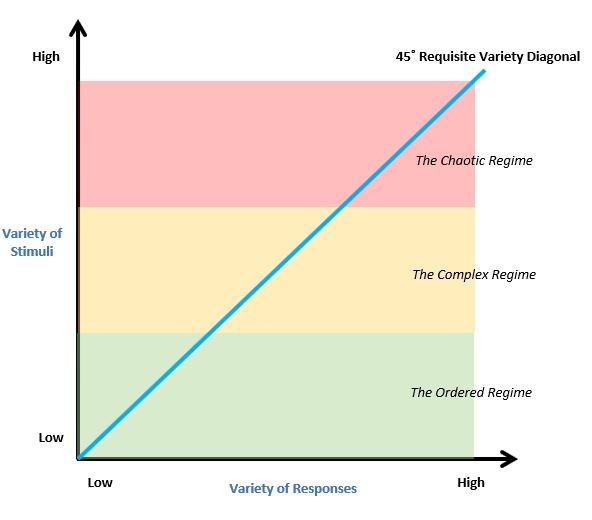

To some, the minimax principle might sound like a cautious philosophy, one that restrains progress. This would be a misunderstanding. The aim is not to prevent change but to cultivate conditions under which change can occur without catastrophic harm. Here the insights of Magoroh Maruyama are valuable. In his work on what he called the second cybernetics, he distinguished between negative feedback processes that counteract deviation and positive feedback processes that amplify it. He noted that deviation amplification is the essence of morphogenesis. Not all deviations are errors to be corrected. Some are the sources of new order and innovation. Ethical design therefore should not eliminate deviation but create conditions in which positive deviation can be generative. To minimize maximum harm is not the same as to minimize deviation. It is about preserving the space in which positive deviation can arise safely.

Von Foerster’s imperative and Maruyama’s insight converge here. Both point toward the idea that ethics in complex systems must not suppress variety. Von Foerster’s view was that more freedom comes with more responsibility. When we create systems that expand choice, we simultaneously increase the responsibility of those who act within them. The ethical task is not to eliminate risk but to manage it in a way that nurtures diversity and growth while protecting the conditions of future choice. To design ethically is to create the space in which deviation, learning, and emergence can unfold without irreversible harm.

Behind every visible structure of management lies an invisible infrastructure. It consists of relationships, trust, informal knowledge, and the tacit coordination that keeps work alive. This infrastructure is often taken for granted. It is noticed only when it breaks down. In the pursuit of efficiency, organizations frequently erode these invisible supports. Staff reductions, rigid procedures, and mechanistic control can destroy the very human capacities that enable adaptability and resilience. The question therefore is not what can be gained but what can be lost without recovery. True resilience depends on maintaining the conditions that allow the system to heal itself. When we ask this question, we are asking what choices we are removing from people. We are asking what futures we are closing off.

It is important to distinguish ethics from progress. Ethics does not belong to the domain of progress. Progress concerns the expansion of capability. Ethics concerns the preservation of humanity. The two may coexist, but they are not the same. Progress without ethical constraint risks creating conditions from which recovery is impossible. Ethics without openness to change risks paralysis. The minimax principle, interpreted through von Foerster and Ulrich, provides a way to hold both. It calls for action that reduces maximum harm while sustaining the capacity for continued evolution.

Maruyama’s perspective deepens this understanding. By allowing positive deviation, we cultivate the potential for new forms of order. By preserving choice, we protect against harm that would close the future. The task of management therefore is not to optimize the present but to sustain the possibility of better futures without destroying the diversity from which they may emerge.

Ackoff’s view was that the future is not something to be predicted but something to be designed. The ethical responsibility of design is to ensure that this future remains open. To minimize maximum harm is to recognize the fragility of what is human in our systems. To preserve choice is to keep open the horizon of possibility. To embrace positive deviation is to invite emergence without destruction. Ethics in management is not about perfection or certainty. It is about maintaining the delicate balance between care and change.

Final Words:

When compromises are inevitable in human systems, the most humane path is to protect what allows us to begin again. The minimax principle is an invitation to ask different questions in our organizations. It is an invitation to be aware of who loses in the systems we design. It is an invitation to increase the number of choices available to people. It is an invitation to preserve reversibility and to protect the invisible infrastructure that sustains our collective work. We are responsible for our construction of these systems. We are responsible for the futures we foreclose and the futures we keep open. To be an authentic manager is to be aware of this responsibility and to strive, always, to minimize the harm we might do while creating conditions for emergence and learning.

Stay curious and always keep on learning.