In today’s post, I am following up on my last post and looking further at the idea of constraints as proposed by Dr. Lila Gatlin. Gatlin was an American biophysicist who used information theory to propose an information-processing aspect of life. In information theory, the ‘constraints’ are the ‘redundancies’ utilized for the transmission of the message. Gatlin’s use of this idea from an evolutionary standpoint is quite remarkable. I will explain the idea of redundancies in language using an example I have used before here. This is the famous idea that if a monkey had infinite time on its hands and a typewriter, it would at some point type out the entire works of Shakespeare, just by randomly striking the typewriter keys. It is obviously highly unlikely that a monkey could actually do this. In fact, this was investigated further by William R. Bennett, Jr., a Yale professor of Engineering. As Jeremy Campbell notes in his wonderful book, Grammatical Man:

Bennett… using computers, has calculated that if a trillion monkeys were to type ten keys a second at random, it would take more than a trillion times as long as the universe has been in existence merely to produce the sentence “To be, or not to be: that is the question.”
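A rough back-of-the-envelope check of this claim can be sketched in Python. This is not Bennett's actual calculation. It assumes a 28-key typewriter (26 letters, a space, and an apostrophe), drops the punctuation from the target sentence, takes the age of the universe as roughly 13.8 billion years, and approximates the expected wait as one success per 28^39 keystrokes.

```python
# Assumptions (not from Bennett's own calculation): 28 equally likely keys,
# punctuation removed from the target, universe age of about 13.8 billion years.
KEYS = 28
TARGET = "to be or not to be that is the question"
MONKEYS = 10**12          # a trillion monkeys
KEYS_PER_SECOND = 10      # each monkey types ten keys a second
UNIVERSE_SECONDS = 13.8e9 * 365.25 * 24 * 3600

# With equiprobable keys, a specific string of length n turns up roughly
# once every KEYS**n keystrokes.
expected_keystrokes = KEYS ** len(TARGET)
seconds_needed = expected_keystrokes / (MONKEYS * KEYS_PER_SECOND)
universe_ages = seconds_needed / UNIVERSE_SECONDS

print(f"target length: {len(TARGET)} characters")
print(f"universe ages needed: {universe_ages:.2e}")
```

Under these assumptions the number of universe ages needed comes out many orders of magnitude above a trillion, which is consistent with the quoted claim.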

The odds are so poor mainly because the keyboard of a typewriter does not reflect how the letters of the alphabet are actually used in English. The typewriter keyboard has only one key for each letter. This means that every letter has the same chance of being struck. From an information theory standpoint, this represents a maximum entropy scenario. Any letter can come next since they all have the same probability of being struck. In English, however, the distribution of letters is not uniform. Some letters such as “E” are more likely to occur than, say, “Q”. This is a form of “redundancy” in language. Here redundancy refers to regularities, something that occurs on a regular basis. Gatlin referred to this redundancy as “D1”, which she described as divergence from equiprobability. Bennett used this redundancy next in his experiment. This is like saying that some letters now had a lot more keys on the typewriter, so that they are more likely to be struck. Campbell continues:

Bennett has shown that by applying certain quite simple rules of probability, so that the typewriter keys were not struck completely at random, imaginary monkeys could, in a matter of minutes, turn out passages which contain striking resemblances to lines from Shakespeare’s plays. He supplied his computers with the twenty-six letters of the alphabet, a space and an apostrophe. Then, using Act Three of Hamlet as his statistical model, Bennett wrote a program arranging for certain letters to appear more frequently than others, on the average, just as they do in the play, where the four most common letters are e, o, t, and a, and the four least common letters are j, n, q, and z. Given these instructions, the computer monkeys still wrote gibberish, but now it had a slight hint of structure.

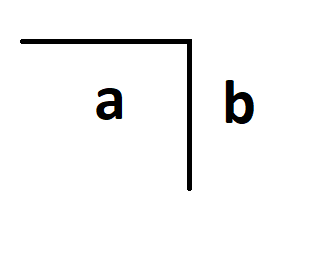
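The contrast between a keyboard with one key per letter and a keyboard weighted by letter frequency can be sketched in a few lines of Python. This is only an illustration, not Bennett's program: the short sample text stands in for Act Three of Hamlet, and `ALPHABET`, `letter_weights`, `entropy_bits`, and `type_monkey` are hypothetical names chosen for this sketch.

```python
import math
import random
from collections import Counter

# 26 letters, a space and an apostrophe, as in Bennett's setup.
ALPHABET = "abcdefghijklmnopqrstuvwxyz '"

def entropy_bits(weights):
    """Shannon entropy (bits per symbol) of a distribution given as weights."""
    total = sum(weights)
    return -sum((w / total) * math.log2(w / total) for w in weights if w > 0)

def letter_weights(sample_text):
    """Count how often each key of ALPHABET occurs in the sample text."""
    counts = Counter(ch for ch in sample_text.lower() if ch in ALPHABET)
    return [counts[ch] for ch in ALPHABET]

def type_monkey(n_keys, weights=None, seed=0):
    """Strike n_keys keys; weights=None means every key is equally likely."""
    rng = random.Random(seed)
    return "".join(rng.choices(ALPHABET, weights=weights, k=n_keys))

# Stand-in for the statistical model (an assumption; Bennett used Hamlet, Act Three).
sample = "to be or not to be that is the question"
weights = letter_weights(sample)

h_max = math.log2(len(ALPHABET))   # maximum entropy: all keys equiprobable
h1 = entropy_bits(weights)         # entropy once letter frequencies are used

print("uniform keys :", type_monkey(40))
print("weighted keys:", type_monkey(40, weights))
print(f"entropy drops from {h_max:.2f} to {h1:.2f} bits per key")
```

The drop in entropy below the maximum is the kind of departure from equiprobability that D1 is meant to capture. The weighted output is still gibberish, but the common letters of the sample now dominate.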

The next type of redundancy in English is the divergence from independence. In English, we know that certain letters are more likely to come together, for example “ing”, “qu” or “ion”. If we see an “i” followed by an “o”, then there is a high chance that the next letter is going to be an “n”. If we see a “q”, we can be fairly sure that the next letter is going to be a “u”. The occurrence of one letter makes the occurrence of another letter highly likely. In other words, this type of redundancy makes the letters interdependent rather than independent. Gatlin referred to this as “D2”. Bennett utilized this redundancy for his experiment:

Next, Bennett programmed in some statistical rules about which letters are likely to appear at the beginning and end of words, and which pairs of letters, such as th, he, qu, and ex, are used most often. This improved the monkey’s copy somewhat, although it still fell short of the Bard’s standards. At this second stage of programming, a large number of indelicate words and expletives appeared, leading Bennett to suspect that one-syllable obscenities are among the most probable sequences of letters used in normal language. Swearing has a low information content! When Bennett then programmed the computer to take into account triplets of letters, in which the probability of one letter is affected by the two letters which come before it, half the words were correct English ones and the proportion of obscenities increased. At a fourth level of programming, where groups of four letters were considered, only 10 percent of the words produced were gibberish and one sentence, the fruit of an all-night computer run, bore a certain ghostly resemblance to Hamlet’s soliloquy:

TO DEA NOW NAT TO BE WILL AND THEM BE DOES

DOESORNS CALAWROUTOULD

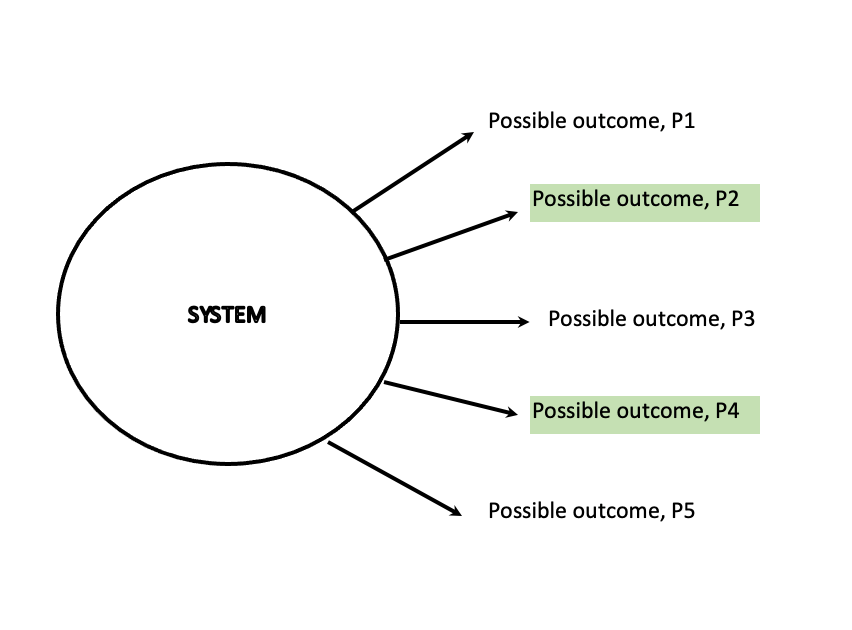

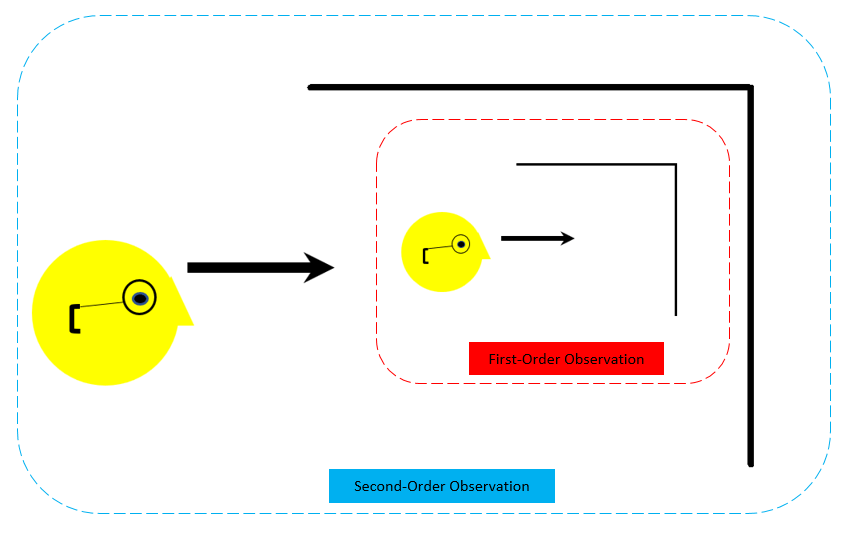
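The idea of letting the previous letters influence the next one can be sketched as a small character-level Markov model. This is a minimal sketch, not a reconstruction of Bennett's program: the function names `build_model` and `generate` are hypothetical, the training text is a tiny stand-in for Hamlet, and `order` is the number of preceding letters taken into account (1 for pairs, 2 for triplets, 3 for groups of four).

```python
import random
from collections import Counter, defaultdict

def build_model(text, order):
    """Map each run of `order` letters to counts of the letter that follows it."""
    if order < 1:
        raise ValueError("order must be at least 1")
    model = defaultdict(Counter)
    for i in range(len(text) - order):
        model[text[i:i + order]][text[i + order]] += 1
    return model

def generate(model, order, length, seed=0):
    """Type `length` characters, choosing each one given the previous `order`."""
    rng = random.Random(seed)
    contexts = sorted(model)
    out = rng.choice(contexts)
    while len(out) < length:
        followers = model.get(out[-order:])
        if not followers:
            # Dead end (context never seen with a follower): restart somewhere.
            out += rng.choice(contexts)
            continue
        letters = sorted(followers)
        counts = [followers[ch] for ch in letters]
        out += rng.choices(letters, weights=counts, k=1)[0]
    return out[:length]

# Stand-in training text (an assumption; Bennett used Act Three of Hamlet).
text = ("to be or not to be that is the question whether tis nobler "
        "in the mind to suffer the slings and arrows of outrageous fortune")

for order in (1, 2, 3):
    print(order, generate(build_model(text, order), order, 60))
```

With such a short training text the higher orders mostly replay fragments of the original, which is the same effect Campbell describes on a larger scale: the more context is used, the more word-like the output becomes.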

We can see that as Bennett’s experiment started using more and more redundancies found in English, a certain structure seems to emerge. With the use of redundancies, even though it might appear that the monkeys were free to choose any key, the program made it such that certain events were more likely to happen than others. This is the basic premise of constraints. Constraints make certain things more likely to happen than others. This is different than a cause-and-effect phenomenon like a billiard ball hitting another billiard ball. Gatlin’s brilliance was to use this analogy with evolution. She pondered why some species were able to evolve to be more complex than others. She concluded that this has to do with the two types of redundancies, D1 and D2. She considered the transmission of genetic material to be similar to how a message is transmitted from the source to the receiver. She determined that some species were able to evolve differently because they were able to use the two redundancies in an optimal fashion.

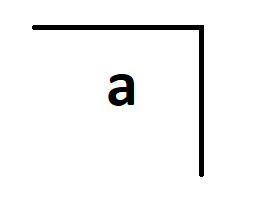

If we come back to the analogy with language, and if we were to only use D1 redundancy, then we would have a very high success rate of repeating certain letters again and again. Eventually, the strings we generate would become monotonous, without any variety. It would be something like EEEAAEEEAAAEEEO. Novelty is introduced when we utilize the second type of redundancy, D2. Using D2 introduces a greater likelihood of emergence since there are more connections present. Campbell explains the two redundancies further:

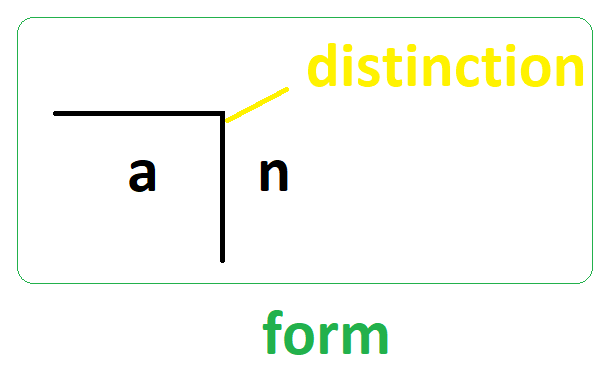

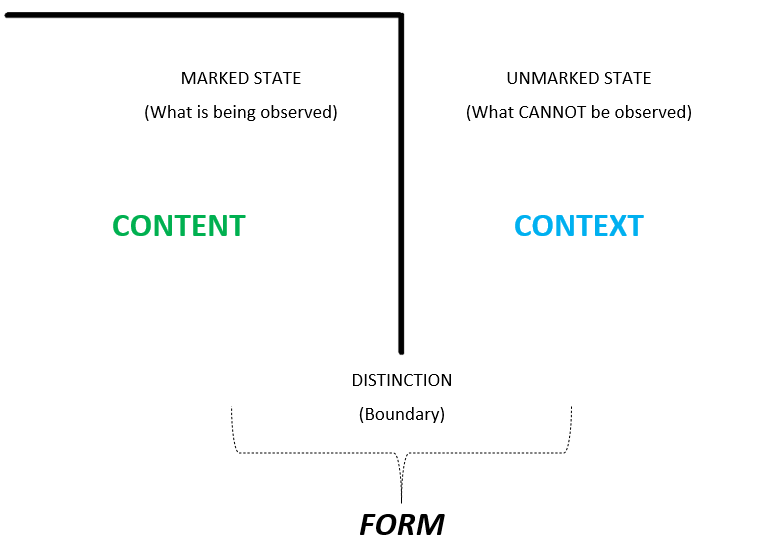

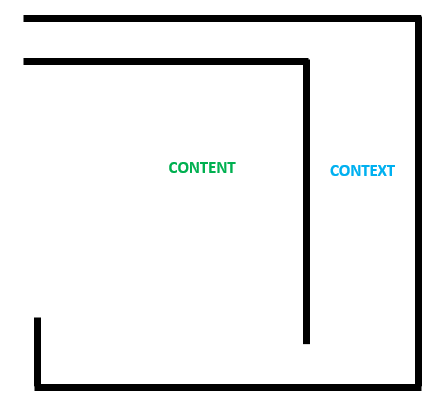

Both kinds lower the entropy, but not in the same way, and the distinction is a critical one. The first kind of redundancy, which she calls D1, is the statistical rule that some letters are likely to appear more often than the others, on the average, in a passage of text. D1, which is context-free, measures the extent to which a sequence of symbols generated by a message source departs from the completely random state where each symbol is just as likely to appear as any other symbol. The second kind of redundancy, D2, which is context-sensitive, measures the extent to which the individual symbols have departed from a state of perfect independence from one another, departed from a state in which context does not exist. These two types of redundancy apply as much to a sequence of chemical bases strung out along a molecule of DNA as to the letters and words of a language.

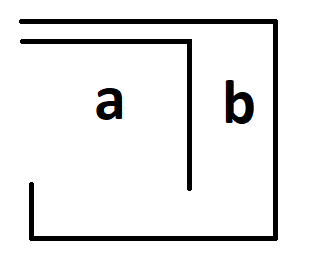
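The two divergences can be estimated from any sequence of symbols. The sketch below assumes the commonly cited forms D1 = log2(a) − H1 and D2 = H1 − H(next symbol | previous symbol), where a is the alphabet size and H1 is the single-symbol entropy. These formulas are not spelled out in the passage above, so treat them as an assumption, and `gatlin_divergences` as a hypothetical helper name.

```python
import math
from collections import Counter

def gatlin_divergences(text, alphabet):
    """Estimate (D1, D2) in bits per symbol from single and pair frequencies."""
    symbols = [ch for ch in text if ch in alphabet]
    n = len(symbols)

    # H1: entropy of single symbols; D1 is its shortfall from the maximum.
    singles = Counter(symbols)
    h_max = math.log2(len(alphabet))
    h1 = -sum((c / n) * math.log2(c / n) for c in singles.values())

    # Conditional entropy of a symbol given the one before it.
    pairs = Counter(zip(symbols, symbols[1:]))
    firsts = Counter(symbols[:-1])
    n_pairs = n - 1
    h_cond = -sum((c / n_pairs) * math.log2(c / firsts[a])
                  for (a, _), c in pairs.items())

    return h_max - h1, h1 - h_cond

# Equiprobable letters, but each letter fully determines the next one.
d1, d2 = gatlin_divergences("abababab", "ab")
print(f"alternating: D1={d1:.2f}, D2={d2:.2f}")

# One letter dominates: the sequence departs from equiprobability.
d1, d2 = gatlin_divergences("aaaaaaab", "ab")
print(f"lopsided   : D1={d1:.2f}, D2={d2:.2f}")
```

The alternating string shows why the distinction matters: both letters are equally frequent, so D1 is zero, yet the context removes all uncertainty about the next letter, so all of the redundancy sits in D2. On short samples these are rough estimates only.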

Campbell suggests that D2 is a richer version of redundancy because it permits greater variety, while at the same time controlling errors. Campbell also notes that Bennett had to utilize the D1 constraint as a constant, whereas he had to keep on increasing the D2 constraints to the limit of his equipment until he saw something roughly similar to sensible English. Using this analogy to evolution, Gatlin notes:

Let us assume that the first DNA molecules assembled in the primordial soup were random sequences, that is, D2 was zero, and possibly also D1. One of the primary requisites of a living system is that it reproduces itself accurately. If this reproduction is highly inaccurate, the system has not survived. Therefore, any device for increasing the fidelity of information processing would be extremely valuable in the emergence of living forms, particularly higher forms… Lower organisms first attempted to increase the fidelity of the genetic message by increasing redundancy primarily by increasing D1, the divergence from equiprobability of the symbols. This is a very unsuccessful and naive technique because as D1 increases, the potential message variety, the number of different words that can be formed per unit message length, declines.

Gatlin determined that this was the reason why invertebrates remained “lower organisms”. She continues:

A much more sophisticated technique for increasing the accuracy of the genetic message without paying such a high price for it was first achieved by vertebrates. First, they fixed D1. This is a fundamental prerequisite to the formulation of any language, particularly more complex languages… The vertebrates were the first living organisms to achieve the stabilization of D1, thus laying the foundation for the formulation of a genetic language. Then they increased D2 at relatively constant D1. Hence, they increased the reliability of the genetic message without loss of potential message variety. They achieved a reduction in error probability without paying too great a price for it… It is possible within limits to increase the fidelity of the genetic message without loss of potential message variety provided that the entropy variables change in just the right way, namely, by increasing D2 at relatively constant D1. This is what the vertebrates have done. This is why we are “higher” organisms.

Final Words:

I have always wondered about the exponential advancement of technology and how we as a species were able to achieve it. Gatlin’s ideas made me wonder if they are applicable to our species’ tremendous technological advancement. We started off with stone tools and now we are on the brink of visiting Mars. It is quite likely that we first came across a sharp stone, cut ourselves on it, and then thought of using it for cutting things. From there, we realized that we could sharpen certain stones to get the same result. Gatlin puts forth that during the initial stages, it is extremely important that errors are kept to a minimum. We had to first get better at stone tools before we could proceed to higher and more complex tools. The complexification happened when we were able to make connections, that is, by increasing D2 redundancy. As Gatlin states, D2 endows the structure. The more tools and ideas we could connect, the faster and better we could invent new technologies. The exponential growth only came about when we were able to connect more things to each other.

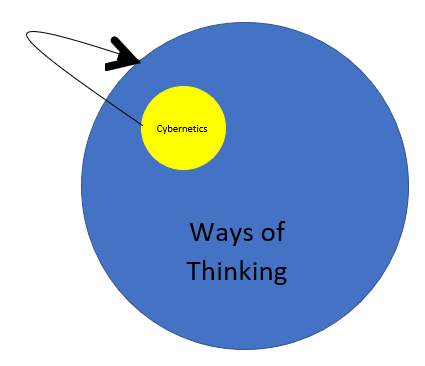

I was introduced to Gatlin’s ideas through Campbell and Alicia Juarrero. As far as I could tell, Gatlin did not use the terms “context-free” or “context-sensitive”. They seem to have been used by Campbell. Juarrero refers to “context-free constraints” as “governing constraints” and “context-sensitive constraints” as “enabling constraints”. I will be writing about these in a future post. I will finish with a neat observation about the ever-present redundancies in the English language from Claude Shannon, the father of information theory:

The redundancy of ordinary English, not considering statistical structure over greater distances than about eight letters, is roughly 50%. This means that when we write English half of what we write is determined by the structure of the language and half is chosen freely.

In other words, if you follow the basic rules of the English language, you could make sense of at least 50% of what you have written, as long as you use short words!

Please maintain social distance, wear masks and get vaccinated, if able. Stay safe and always keep on learning… In case you missed it, my last post was More Notes on Constraints in Cybernetics.